Figure 1: Hypotheses Consistent with Observations

By definition, a hypothesis stands in contrast to an assertion, as follows:

| Assertion | Hypothesis |
|---|---|
| We are more familiar with assertions. An example is the statement, “It’s raining outside” or equivalently “I assert that it’s raining outside.” When you assert something, you are saying you believe it to be true, and that other people should be able to rely on your assertion. If you make a habit of asserting things that are not true, it will ruin your reputation. | By way of example, in the statement “I hypothesize that it’s raining outside”, the hypothesis is the dependent clause, i.e. the clause that says “it’s raining outside.” When you hypothesize something, you are not asserting anything about whether the hypothesis is true. It might be known true, known false, probable, improbable, completely unknown, or whatever. Usually the intention is to determine at some later stage the truth or falsity or probability of the hypothesis. This is analogous to a common situation in ordinary algebra, where we introduce a variable x in hopes that the value of x will be determined at some later stage. |

A hypothesis is not a prediction or even a guess. If I predict that the sun will rise in the east, and I do the experiment, I am implicitly considering two hypotheses: Either it will rise in the east or it won’t. The point is that here we have one prediction but two hypotheses.

Terminology: The word “scenario” is very similar in meaning to the word “hypothesis”.

Terminology: Analyzing a set of hypotheses is sometimes called a “what if” analysis. It may also be called a “case by case” analysis.

There are two main ways of using a hypothesis, namely hypothesis testing and proof by contradiction.

Additional meanings, connotations, and synonyms will be discussed in section 5.

It must be emphasized that a hypothesis is not a guess. Writing down a hypothesis does not express or connote any belief as to whether the hypothesis is true or not. When we offer a hypothesis, we are not even hinting that we want it to be true.

There are plenty of words that express the idea of guess, including guess, conjecture, supposition, expectation, et cetera. We do not need another word to express the same idea. We should certainly not abuse the word hypothesis by putting it on this list. In the context of proof by contradiction, we write down a hypothesis with every expectation that it will be proved false. Again, a hypothesis is just like the algebraic variable x: the point is that we will at some later point calculate the value of x. We do not need to guess it in advance.

Let us define a hypothetical scenario as follows: it consists of a group of logical statements called the body of the scenario, plus another statement called the hypothesis of the scenario. We then establish the rule, as a notational convention, that for every statement s in the body of the scenario, if we see it written in the form “s”, we interpret it as meaning “if H then s”, where H is the hypothesis of the scenario.

That’s really all there is to it.

Remark: This is just a notational convention. Any calculation performed using a hypothetical scenario could equally well be performed without it, simply by replacing each statement “s” in the body of the scenario by the conditional statement “if H then s”. The two versions would be equally rigorous. The only difference is that one would be more verbose.

We can express the idea of hypothetical scenario more formally, as follows. The following statement of Boolean logic is guaranteed to hold for all H, A, B, and C:

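| $H \Rightarrow (A \wedge B \wedge C) \;\equiv\; (H \Rightarrow A) \wedge (H \Rightarrow B) \wedge (H \Rightarrow C)$ | (1) |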

Remember the rule: If you want to take a result that was calculated within a scenario (such as C) and use it outside the scenario, you must explicitly make it conditional on the hypothesis (by saying if H then C).

In connection with equation 1, the definition of hypothesis is merely a bit of terminology. We say that in this equation, H plays the role of hypothesis.
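Because there are only 16 combinations of truth values for H, A, B, and C, the claim that the identity holds in all cases can be checked by brute force. The following Python sketch assumes equation 1 has the form “if H then (A and B and C)” on one side and “(if H then A) and (if H then B) and (if H then C)” on the other. The helper names `implies`, `inside_scenario`, and `outside_scenario` are introduced here purely for illustration.

```python
from itertools import product


def implies(h: bool, s: bool) -> bool:
    """Material implication: 'if h then s'."""
    return (not h) or s


def inside_scenario(h: bool, a: bool, b: bool, c: bool) -> bool:
    # Combine the statements inside the scenario, then make the
    # combined result conditional on the hypothesis once.
    return implies(h, a and b and c)


def outside_scenario(h: bool, a: bool, b: bool, c: bool) -> bool:
    # Make every statement explicitly conditional on the hypothesis.
    return implies(h, a) and implies(h, b) and implies(h, c)


# Exhaustively compare the two forms over all 16 truth assignments.
distributive_law_holds = all(
    inside_scenario(h, a, b, c) == outside_scenario(h, a, b, c)
    for h, a, b, c in product([False, True], repeat=4)
)
print(distributive_law_holds)
```

The check makes no assumption about whether H is true. The two forms agree when H is false just as they do when H is true.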

Equation 1 is nothing more or less than an application of the distributive law of Boolean logic. It is closely analogous to the distributive law for ordinary arithmetic, which tells us that the following assertion is true for all numbers X, A, B, and C:

| $X \cdot (A + B + C) = X \cdot A + X \cdot B + X \cdot C$ | (2) |

Hint: It is super-important to distinguish what’s inside the body of the scenario and what’s outside. Lots of things that are valid inside the body would not be valid outside. This applies most spectacularly to the hypothesis H itself. Inside the scenario, you may freely assert that H is true ... but that does not mean that H is true in any absolute sense. It is only conditionally true. Like everything else inside the scenario, it is conditional on H.

Suppose you want to take H, which is valid inside the scenario, and move it outside the scenario. If you follow the rules, you end up with the statement “if H then H”, which is a tautology. It is always unconditionally true, whether H itself is true or not.

Remark: As defined here, there is absolutely no requirement that the hypothesis be known in advance to be true or not. It might be known true, known false, or (much more likely) simply unknown, or dependent on unspecified details.

Example: A high-school geometry book can be considered one long hypothetical scenario. The hypothesis is a statement of the axioms of Euclidean geometry. Therefore, when the book asserts the Pythagorean formula a² + b² = c², you must not take that to mean that a² + b² = c² always, but rather it means that a² + b² = c² in a Euclidean space!

As it turns out, there are lots of non-Euclidean spaces where the Pythagorean formula is not valid.

Hypothesis testing is important in many aspects of real life. It is not confined to the science lab.

For example, suppose there is something wrong with the brakes on your car. You observe that the pedal is “soft” but you don’t (yet) know the underlying cause. You need to consider all the plausible hypotheses.

If you fail to consider all the plausible hypotheses, you could waste a tremendous amount of time and money replacing and repairing the wrong things.

Here are some more examples of hypotheses:

- The sum of the first N positive integers is N(N+1)/2.
- The sum of the first N positive integers is 2N.
- Cows eat grass.

Table 1: Example Hypotheses (shorthand)

The foregoing hypotheses appear to have the same form as ordinary assertions, i.e. simple declarative sentences. However we know that these are hypotheses, because they have been declared as such by the context, in the sentence before the table and in the caption of the table. Therefore, the statements in table 1 must be recognized as shorthand for the following:

- We hypothesize that the sum of the first N positive integers is N(N+1)/2.
- We hypothesize that the sum of the first N positive integers is 2N.
- We hypothesize that cows eat grass.

Table 2: Example Hypotheses (explicitly hypothetical)

It is important to make sure that everybody knows that a hypothetical statement is hypothetical. Sometimes it is clear from context that a statement is meant to be hypothetical, in which case the shorthand forms in table 1 are adequate. However, it is always safer to spell it out, as in table 2.

As a final clarification, we should rewrite the hypotheses as follows:

- We hypothesize that for all N, the sum of the first N positive integers is N(N+1)/2.
- We hypothesize that for all N, the sum of the first N positive integers is 2N.
- We hypothesize that every cow in the world eats grass.

Table 3: Example Hypotheses (explicit quantifier)

The point here is that we have added the phrase “for all N” or words to that effect. This is an example of the universal quantifier. It explicitly says that the rest of the statement applies to all the positive integers in the universe. This was implicit in previous versions of our example. See section 3.4 for more about quantifiers.

Because of the universal quantifier, the hypothesis that “for all N, the sum of the first N positive integers is N(N+1)/2” is in effect the conjunction of a countably-infinite number of simpler hypotheses, specifically:

- when N=1, the sum of the first N positive integers is N(N+1)/2 ... and ...
- when N=2, the sum of the first N positive integers is N(N+1)/2 ... and ...
- when N=3, the sum of the first N positive integers is N(N+1)/2 ... and ...
- when N=4, the sum of the first N positive integers is N(N+1)/2 ... and ...
- et cetera.

Table 4: Binding: General to Specific

Another principle of formal logic says we can pull a particular factor out of that big conjunction. For example, we can pull out the N=3 factor, in which case the general hypothesis “for all N, the sum of the first N positive integers is N(N+1)/2” implies the more specific hypothesis “when N=3, the sum of the first N positive integers is N(N+1)/2”.
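The idea of a general hypothesis as a conjunction of specific ones can be illustrated with a short Python sketch. It evaluates the first few conjuncts of the two algebraic hypotheses from table 3. The function names and the cutoff `LIMIT = 20` are arbitrary choices made for this illustration. Checking finitely many conjuncts can refute a universal hypothesis, but cannot prove it.

```python
def sum_first(n: int) -> int:
    """Sum of the first n positive integers, computed directly."""
    return sum(range(1, n + 1))


def hypothesis_a(n: int) -> bool:
    # One specific conjunct of "for all N, the sum is N(N+1)/2".
    return sum_first(n) == n * (n + 1) // 2


def hypothesis_b(n: int) -> bool:
    # One specific conjunct of "for all N, the sum is 2N".
    return sum_first(n) == 2 * n


LIMIT = 20  # arbitrary cutoff for this illustration

# Pull out the N=3 factor of the first hypothesis.
print(hypothesis_a(3))

# Collect the values of N for which each specific hypothesis fails.
failures_a = [n for n in range(1, LIMIT + 1) if not hypothesis_a(n)]
failures_b = [n for n in range(1, LIMIT + 1) if not hypothesis_b(n)]
print(failures_a)
print(failures_b[:3])
```

A single failing conjunct, such as N=1 for the second hypothesis, is enough to show that the whole conjunction is false.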

The next step is to find out more about the truth or falsity of our hypotheses. In some cases (including the algebraic examples in table 3) it is possible to determine the truth or falsity using purely theoretical techniques (in this case algebra). Sometimes, though, we don’t have enough theoretical traction to fully solve the problem, so we must supplement the theoretical analysis with empirical methods. This empirical process is what we call hypothesis testing.

This can be understood in terms of the following table. For each hypothesis H1 through H6 and for each data point D1 through D5, we apply the given hypothesis to the given data point, and ask whether the resulting specific statement is true or false.

| | D1 | D2 | D3 | D4 | D5 |
|---|---|---|---|---|---|
| H1 | Y | Y | Y | Y | Y |
| H2 | N | N | Y | N | N |
| H3 | Y | Y | N | Y | Y |
| H4 | Y | Y | Y | Y | Y |
| H5 | Y | Y | N | N | N |
| H6 | N | N | N | N | N |

In this example, we see that hypothesis H4 is consistent with all five data points, as is H1.

Sometimes, especially for binary data, this form of hypothesis testing can be seen as a winnowing process. That is, we start out with a large number of hypotheses, which we initially categorize as “untested”. Then, as the data comes in, we will gradually be able to move some of the hypotheses out of the “untested” category. We move each hypothesis into the “consistent” category or the “inconsistent” category, according to whether or not it turns out to be consistent with all the data seen so far.
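The winnowing process can be sketched in a few lines of Python. The consistency table is transcribed from the table above as it is printed. The function name `winnow` and the representation of each row as a string of Y/N characters are assumptions made for this illustration. With the table as printed, both H1 and H4 survive all five data points.

```python
# Consistency table: rows are hypotheses, columns are data points D1..D5.
TABLE = {
    "H1": "YYYYY",
    "H2": "NNYNN",
    "H3": "YYNYY",
    "H4": "YYYYY",
    "H5": "YYNNN",
    "H6": "NNNNN",
}


def winnow(table):
    """Return the set of surviving hypotheses after each data point."""
    contenders = set(table)
    history = []
    n_points = len(next(iter(table.values())))
    for i in range(n_points):
        # Drop every hypothesis that is inconsistent with data point i.
        contenders = {h for h in contenders if table[h][i] == "Y"}
        history.append(set(contenders))
    return history


history = winnow(TABLE)
for i, survivors in enumerate(history, start=1):
    print(f"after D{i}: {sorted(survivors)}")
```

Once a hypothesis has been moved to the inconsistent category it is never reconsidered, which is what makes this strict binary winnowing.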

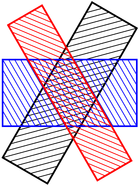

The winnowing process is illustrated in figure 1. The black rectangle represents all the hypotheses that are consistent with the first observation; the red rectangle represents all the hypotheses that are consistent with the second observation, and the blue rectangle represents all the hypotheses that are consistent with the third observation. The middle region, where all three rectangles overlap, represents the hypotheses that are consistent with all three observations.

As a specific example of this, consider the famous “Twelve Coins” puzzle (reference 1). We start with 24 hypotheses: the first coin could be heavy (with all other coins normal), the first coin could be light (with all other coins normal), and so on for the remaining coins. At the beginning of the investigation, before any weighings have been performed, all 24 of these hypotheses are in the set of contenders. After the first weighing, we can rule out most of these, leaving us with a smaller number of contenders, typically 8 contenders. After the second weighing, there are typically 3 contenders, and after the third weighing there should be only a single contender, which we anoint as “the” answer, i.e. “the” correct hypothesis.
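The first step of this winnowing can be sketched in Python. The sketch assumes a first weighing of coins 1 to 4 against coins 5 to 8, which is a common opening but is not specified in the text. The names `predicted_outcome` and `winnow` are hypothetical helpers. Only the first weighing is shown.

```python
from itertools import product

COINS = range(1, 13)
# 24 hypotheses: (coin, +1) means that coin is heavy, (coin, -1) means light.
HYPOTHESES = [(coin, sign) for coin, sign in product(COINS, (+1, -1))]


def predicted_outcome(hypothesis, left, right):
    """Which pan goes down under this hypothesis: 'left', 'right', or 'balanced'."""
    coin, sign = hypothesis
    if coin in left:
        return "left" if sign > 0 else "right"
    if coin in right:
        return "right" if sign > 0 else "left"
    return "balanced"


def winnow(contenders, left, right, observed):
    """Keep only the hypotheses consistent with the observed outcome."""
    return [h for h in contenders
            if predicted_outcome(h, left, right) == observed]


# Assumed first weighing: coins 1-4 on the left pan, coins 5-8 on the right.
left, right = {1, 2, 3, 4}, {5, 6, 7, 8}
survivors = {
    outcome: winnow(HYPOTHESES, left, right, outcome)
    for outcome in ("left", "balanced", "right")
}
for outcome, remaining in survivors.items():
    print(outcome, len(remaining))
```

Under this assumed weighing, each of the three possible outcomes leaves 8 of the 24 hypotheses standing.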

Note that when we first construct the set of N hypotheses, there is no expectation that every hypothesis is correct; indeed we expect that eventually N−1 of them will be shown to be incorrect. At the other extreme, it is pointless (but usually harmless) to include hypotheses that have no chance of being correct. A good guideline is to include all the hypotheses that have an appreciable (i.e. more-than-infinitesimal) probability of turning out to be correct. These are called the plausible hypotheses.

You may find Bongard Problems (reference 2) to be good examples and exercises in hypothesis testing.

Strict binary winnowing is not the whole story.

In some cases, we have binary data, but we have some tolerance for error. That is, for each hypothesis, we keep track of how often it is consistent with a data point and how often it is inconsistent. We do not necessarily exclude a hypothesis the first time an inconsistent data point shows up.

More generally, rather than binary consistent/inconsistent pass/fail grading, there will be analog information about goodness of fit. For each hypothesis, we keep track of some sort of weighted average of how well it fits the data.
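As a rough illustration of grading with tolerance, the following Python sketch scores each hypothesis by the fraction of data points it is consistent with, reusing the Y/N table from earlier in this section. The unweighted fraction and the tolerance `MAX_MISSES = 1` are assumptions chosen for simplicity. A real analysis would typically use a weighted measure suited to the data.

```python
# Consistency table: rows are hypotheses, columns are data points D1..D5.
TABLE = {
    "H1": "YYYYY",
    "H2": "NNYNN",
    "H3": "YYNYY",
    "H4": "YYYYY",
    "H5": "YYNNN",
    "H6": "NNNNN",
}

MAX_MISSES = 1  # assumed tolerance: allow one inconsistent data point


def fit_fraction(row: str) -> float:
    """Fraction of data points with which the hypothesis is consistent."""
    return row.count("Y") / len(row)


# Analog-style score for every hypothesis.
scores = {h: fit_fraction(row) for h, row in TABLE.items()}

# Hypotheses that stay in contention under the assumed tolerance.
tolerated = sorted(h for h, row in TABLE.items()
                   if row.count("N") <= MAX_MISSES)

print(scores)
print(tolerated)
```

With this tolerance, H3 remains a contender despite its single miss, whereas strict winnowing would have discarded it at D3.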

It is important to think clearly about quantifiers, because if we change the second hypothesis in table 3 to read “For some N, the sum of the first N positive integers is 2N” we completely change the meaning. (The phrase “for some N” is called the existential quantifier, because it says there exists some N that makes the statement true.) Indeed the existential version is true, since N=3 gives 1+2+3 = 6 = 2×3, whereas the universal version is false.
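The difference between the two quantifiers maps directly onto Python's built-in `all` and `any`. The sketch below applies both to the same claim over a finite sample. The range 1 to 100 is an arbitrary choice. A finite sample can establish a “for some” statement by exhibiting a witness, and can refute a “for all” statement by exhibiting a counterexample, but it cannot prove a “for all” statement.

```python
def sum_first(n: int) -> int:
    """Sum of the first n positive integers, computed directly."""
    return sum(range(1, n + 1))


def claim(n: int) -> bool:
    # "The sum of the first n positive integers is 2n."
    return sum_first(n) == 2 * n


SAMPLE = range(1, 101)  # arbitrary finite sample of positive integers

holds_for_all = all(claim(n) for n in SAMPLE)   # universal quantifier
holds_for_some = any(claim(n) for n in SAMPLE)  # existential quantifier
witnesses = [n for n in SAMPLE if claim(n)]

print(holds_for_all, holds_for_some, witnesses)
```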

A famous and important application of hypotheses goes by the name proof by contradiction, also known as reductio ad absurdum. This is simply a hypothetical scenario, the point of which is to prove that the hypothesis is false.

As an example, let us build a scenario. We choose as our hypothesis H the assertion that √2 is a rational number. Now you may already know that H is false, but the point of the exercise is to prove this to someone who does not already know it. There is a difference between truth and knowledge, as discussed in reference 3.

On the other side of the same coin, it would be nonsense to assume H is true in the absolute sense, because it turns out that H is not true, i.e. √2 is irrational. If you make false assumptions on line 1 of your calculation, all the rest of the calculation is nonsense.

So we will not assume H. Neither will we assume not-H. We will merely hypothesize H. That is, we will begin a hypothetical scenario, such that every statement in the body of the scenario is conditional on H.

After a few lines of work (which will not be shown here), we come to a contradiction. That is, we derive a statement of the form “q is divisible by 2 and q is not divisible by 2”. This is of the form “X and not-X” and can be further simplified to simply “false”, according to the usual rules of Boolean logic.
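For readers who want them, the omitted lines follow a standard argument, sketched here under the usual assumption (not stated above) that the fraction is written in lowest terms. Every line is understood to be inside the scenario, i.e. conditional on H.

```latex
\begin{align*}
\sqrt{2} &= \frac{p}{q}, \quad p, q \text{ positive integers with no common factor} \\
p^2 &= 2q^2 \quad\Rightarrow\quad p \text{ is divisible by 2, so } p = 2k \text{ for some integer } k \\
4k^2 &= 2q^2 \quad\Rightarrow\quad q^2 = 2k^2 \quad\Rightarrow\quad q \text{ is divisible by 2} \\
&\text{but $p$ and $q$ have no common factor and $p$ is divisible by 2, so $q$ is not divisible by 2}
\end{align*}
```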

Now if we want to use this result outside of the scenario, we must make it explicitly conditional on the hypothesis, so we write “if √2 is rational, then false”.

Next, we need to use some basic facts of logic, which tell us that all the following statements are equivalent:

| $(A \Rightarrow B) \;\equiv\; (\neg A \vee B) \;\equiv\; (\neg B \Rightarrow \neg A)$ | (3) |

In particular, the statement “not-B ⇒ not-A” is called the contrapositive of the statement “A ⇒ B”.

In our case, the contrapositive of the statement “if √2 is rational, then false” is the statement “if true, then √2 is not rational” ... or more simply, “√2 is irrational”, which is what we wanted to prove.
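The logical steps used here are small enough to verify exhaustively. The Python sketch below checks that a conditional statement always agrees with its contrapositive, and that “if A then false” and “if true then not-A” both reduce to “not-A”. The helper name `implies` is introduced only for this illustration.

```python
from itertools import product


def implies(a: bool, b: bool) -> bool:
    """Material implication: 'if a then b'."""
    return (not a) or b


BOOLS = [False, True]

# "A => B" agrees with its contrapositive "not-B => not-A" in every case.
contrapositive_ok = all(
    implies(a, b) == implies(not b, not a)
    for a, b in product(BOOLS, repeat=2)
)

# "if A then false" is the same statement as "not-A" ...
reduces_to_negation = all(implies(a, False) == (not a) for a in BOOLS)

# ... and so is "if true then not-A".
true_premise_drops_out = all(implies(True, not a) == (not a) for a in BOOLS)

print(contrapositive_ok, reduces_to_negation, true_premise_drops_out)
```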

This is quite a famous result. It is attributed to Hippasus of Metapontum, about 2500 years ago.

There are various names that can be used for the statements governed by a conditional statement. In particular, for the statement “if H then C” we have:

| H could be called | C could be called |
|---|---|
| antecedent | consequent |
| premise | conclusion |
| proviso | |
| condition | |
| hypothesis | |

The meaning of “hypothesis” on the last line of the table is entirely consistent with the meaning in section 2, and simply represents the minimal case where there is only a single statement in the body of the scenario. On the other edge of the same sword, the hypothesis of a scenario may equivalently be called the premise of the scenario or the condition of the scenario.

A conjecture is like a hypothesis, perhaps with the additional connotation that you expect it to be true, or you hope it will be true.

An assumption is unlike a hypothesis, because it is assumed to be true. It is fairly common, but sloppy and not recommended, to use the word “assumption” to refer to the hypothesis of a scenario. This is particularly not recommended in the case of proof by contradiction, where the hypothesis is absolutely not an assumption, because it is not true.

Among non-scientists, there is a widespread misconception that hypothesis testing is “the” scientific method. This is quite untrue, and paints a very unhelpful picture of what science is and what scientists do. In fact scientists use a wide variety of methods, as explained in reference 4.