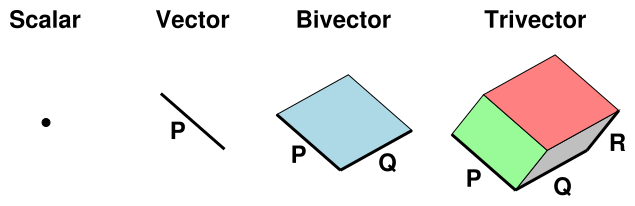

Figure 1: Scalar, Vector, Bivector, and Trivector

We begin by discussing why we should care about Clifford Algebra. (If you want an overview of how Clifford Algebra actually works, skip to section 2.)

| (1) |

| (2) |

One advantage of Clifford algebra is that it gives a unified view of rotations in any number of dimensions from two on up, including four-dimensional spacetime. See reference 2. Similarly, it gives a unified view of scalars, vectors, complex numbers, quaternions, and spinors.

| Cross product | Wedge product |
| --- | --- |
| The cross product only makes sense in three dimensions. | The wedge product is well behaved in any number of dimensions, from zero on up. |
| The cross product is defined in terms of a “right hand rule”. | A wedge product is defined without any notion of handedness, without any notion of chirality. This is discussed in more detail in section 2.14. This is more important than it might seem, because it changes how we perceive the apparent symmetry of the laws of physics, as discussed in reference 3. |
| The cross product only applies when multiplying one vector by another. | The wedge product can multiply any combination of scalars, vectors, or higher-grade objects. |

The wedge product of two vectors is antisymmetric, and involves the sine of the angle between two vectors ... and the same can be said of the cross product.

$$\nabla F = \frac{1}{c\,\epsilon_0}\, J \tag{3}$$

It is worth learning Clifford algebra just to see this equation. For details, see reference 4.

Also: In their traditional form, the Maxwell equations seem to be not left/right symmetric, because they involve cross products. However, we believe that classical electromagnetism does have a left/right symmetry. By rewriting the laws using geometric products, as in equation 3, it becomes obvious that no right-hand rule is needed. A particularly pronounced example of this is Pierre’s puzzle, as discussed in reference 3.

Similarly: The traditional form of the Maxwell equations is not manifestly invariant with respect to special relativity, because it involves a particular observer’s time and space coordinates. However, we believe the underlying physical laws are relativistically invariant. Rewriting the laws using geometric products makes this invariance manifest, as in equation 3.

As an elegant application of the basic idea that the electromagnetic field is a bivector, reference 5 explains why a field that is purely an electric field in one reference frame must be a combination of electric and magnetic fields when observed in another frame.

As a more mathematical application of equation 3, reference 6 calculates the field surrounding a long straight wire.

The purpose of this section is to provide a simple introduction to Clifford algebra, also known as geometric algebra. I assume that you have at least some prior exposure to the idea of vectors and scalars. (You do not need to know anything about matrices.)

For a discussion of why Clifford algebra is useful, see section 1.

This document is also available in PDF format. You may find this advantageous if your browser has trouble displaying standard HTML math symbols.

In addition to scalars and vectors, we will find it useful to consider more-general objects, including bivectors, trivectors, et cetera. Each of these objects has a clear geometric interpretation, as summarized in figure 1.

That is, a scalar can be visualized as an ideal point in space, which has no geometric extent. A vector can be visualized as a line segment, which has length and orientation. A bivector can be visualized as a patch of flat surface, which has area and orientation. Continuing down this road, a trivector can be visualized as a piece of three-dimensional space, which has a volume and an orientation.

Each such object has a grade, according to how many dimensions are involved in its geometric extent. Therefore we say Clifford algebra is a graded algebra. The situation is summarized in the following table.

| object | visualized as | geometric extent | grade |
| --- | --- | --- | --- |
| scalar | point | no geometric extent | 0 |
| vector | line segment | extent in 1 direction | 1 |
| bivector | patch of surface | extent in 2 directions | 2 |
| trivector | piece of space | extent in 3 directions | 3 |
| etc. | | | |

For any vector V you can visualize 2V as being twice as much length, and for any bivector B you can visualize 2B as having twice as much area. Alas this system of geometric visualization breaks down for scalars; geometrically all scalars “look” equally pointlike. Perhaps for a scalar s you can visualize 2s as being twice as hot, or something like that.

For another important visualization idea, see section 2.13.

As mentioned in section 1 and section 6, bivectors make cross products obsolete; any math or physics you could have done using cross products can be done more easily and more logically using a wedge product instead.

The scalars in Clifford algebra are the familiar real numbers. They obey the familiar laws of addition, subtraction, multiplication, et cetera. Addition of scalars is associative and commutative. Multiplication of scalars is associative and commutative, and distributes over addition.

The vectors in Clifford algebra can be added to each other, and can be multiplied by scalars in the usual way. Addition of vectors is associative and commutative. Multiplication by scalars distributes over vector addition. We will introduce multiplication of vectors in section 2.5.

Presumably you are familiar with the idea of adding scalars to scalars, and adding vectors to vectors (tip to tail). We now introduce the idea that any element of the Clifford algebra can be added to any other. This includes adding scalars to vectors, adding vectors to bivectors, and every other combination. So it would not be unusual to find an element C such that:

| C = s + V + B (4) |

where s is a scalar, V is a vector, and B is a bivector.

This clearly sets Clifford algebra apart from ordinary algebra.

Remark: Sometimes non-experts find this disturbing. Adding scalars to vectors is like adding apples to oranges. Well, so be it: people add apples to oranges all the time; it’s called fruit salad. In contrast, it is proverbially unwise to compare apples to oranges, and indeed we will not be comparing scalars to vectors. Addition (s + V) is allowed; comparison (s < V) is not.

Terminology: The most general element of the Clifford algebra we will call a clif. In the literature, the same concept is called a multivector, but we avoid that term because it is misleading, for reasons discussed near the end of section 2.9.

Presumably you already know how to add vectors graphically. In figure 2, vector b adds tip-to-tail to vector x to form c.

In figure 3, the same thing happens, plus we have to worry about what happens at the hinge where the red and green bivectors meet. That’s simple: the red edge in the −a direction cancels the green edge in the a direction, since they are equal and opposite. So when we add a∧b to a∧x we get a∧c, which makes sense, since everything we are doing is linear.

Note: The graphical addition is necessarily hinged at equal-and-opposite edges. If you are ever tempted to join two edges in the same direction, you ought to do a subtraction. Negate one of the bivectors, then add.

As a physics example, consider addition of angular momenta, namely a gyroscopic precession problem. The red bivector is the initial angular momentum of the system, and the small green bivector is additional angular momentum, obtained by applying a torque for some time. (The formulas for torque and angular momentum are given in section 3.) The blue bivector is the new angular momentum, which has a new orientation due to precession. Note that a torque in the a∧x direction causes the angular momentum to rotate in the b∧x direction, which in this example is nearly perpendicular to the torque. This is remarkably different from ordinary straight-line momentum, which changes in the same direction as you push it.

This has important real-world consequences for things like helicopters, which have a tremendous amount of angular momentum. Also, in the Blériot, Sopwith Camel, and many other WWI aircraft, the crankshaft was bolted to the fuselage, and all the rest of the engine rotated along with the propeller. The angular momentum took a bit of getting used to.

Given any clif C, we can talk about the grade-0 piece of it, the grade-1 piece of it, et cetera.

Notation: The grade-N piece of C is denoted $\langle C \rangle_N$.

We will often be particularly interested in the scalar piece, $\langle C \rangle_0$.

Note: If you are familiar with complex numbers, you can understand the ⟨⋯⟩ operator as analogous to the ℜ() and ℑ() operators that select the real and imaginary parts. However, there is one difference: You might think that the imaginary part of a complex number would be imaginary (or zero), but that’s not how it is defined. According to long-established convention, ℑ(z) is a real number, for any complex z. Clifford algebra is more logical: The vector part of any clif is a vector (or zero), the bivector part of any clif is a bivector (or zero), et cetera. See reference 1 for more on this.
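To make the bookkeeping concrete, here is a minimal Python sketch of clif addition and grade selection. The representation is an assumption made purely for illustration, not something the text prescribes: a clif is stored as a dictionary mapping a basis blade to its coefficient, and each basis blade is a bitmask (bit 0 set means γ1 is a factor, bit 1 means γ2, and so on). The helper names `add` and `grade_part` are hypothetical.

```python
def add(A, B):
    """Add two clifs stored as {bitmask: coefficient}."""
    out = dict(A)
    for m, c in B.items():
        out[m] = out.get(m, 0) + c
    return {m: c for m, c in out.items() if c != 0}

def grade_part(C, n):
    """Grade-n piece of C: keep the terms whose basis blade has n factors."""
    return {m: c for m, c in C.items() if bin(m).count("1") == n}

s = {0b000: 3.0}                # the scalar 3
V = {0b001: 1.0, 0b010: 2.0}    # the vector g1 + 2 g2
B = {0b011: 5.0}                # the bivector 5 g1 g2
C = add(add(s, V), B)           # C = s + V + B, as in equation 4

print(grade_part(C, 0))         # scalar piece
print(grade_part(C, 1))         # vector piece
print(grade_part(C, 2))         # bivector piece
```

In this sketch `grade_part` always returns an object of the requested grade (or an empty dictionary, which stands for zero), in keeping with the remark that the vector part of a clif is a vector.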

Axiom: We postulate that there is a geometric product operator that can be used to multiply any element of the Clifford algebra by any other.

Notation: The geometric product of A and B is written AB. That is, we simply juxtapose the multiplicands, without using any operator symbol.

As we shall see in section 2.6, the geometric product AB is distinct from the dot product A·B and also from the wedge product A∧B.

We further postulate that the geometric product is associative and distributes over addition:

$$
\begin{aligned}
(AB)C &= A(BC) \\
A(B + C) &= AB + AC \\
(B + C)A &= BA + CA
\end{aligned}
\tag{5}
$$

where A, B, and C are any clifs.

Multiplication is not commutative in general, as we shall see in section 2.6 and elsewhere, but we remark that the special case of multiplying by scalars is commutative:

$$sA = As$$

for any scalar s and any clif A.

We have already asserted that any clif can be multiplied by any other clif. Multiplying a vector by a vector is a particularly interesting case. At this point Clifford algebra makes a dramatic departure from ordinary vector algebra.

Given two vectors P and Q, we know that the geometric product PQ exists, but (so far) that’s about all we know. However, based on this mere existence, plus what we already know about addition and subtraction, we can define two new products, namely the dot product P·Q and the wedge product P∧Q, as follows:

$$P \cdot Q := \frac{PQ + QP}{2} \tag{6a}$$

$$P \wedge Q := \frac{PQ - QP}{2} \tag{6b}$$

Equation 6a is a very useful formula, but you should not become overly attached to it, because it only applies to grade=1 vectors. It doesn’t work for higher or lower grades. See section 2.10 for a more general formula.

Equation 6b is only slightly more general. It works for any combination of vectors and/or scalars (grade≤1). See section 2.11 for some more general formulas.

As an immediate corollary of equation 6, we can re-express the geometric product of two vectors as:

$$PQ = P \cdot Q + P \wedge Q \tag{7}$$

This useful formula only works when both P and Q are vectors. I keep mentioning this, because some authors take equation 7 to be the “definition” of geometric product (based on some sort of pre-existing notion of dot and wedge). They can get away with that for the product of two plain old vectors, but it fails for higher (or lower) grades, and creates lots of confusion.
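As a numerical illustration of the idea that dot and wedge are the symmetric and antisymmetric parts of the geometric product of two vectors, here is a small Python sketch. It assumes three orthonormal spacelike basis vectors and stores a clif as a dictionary from a basis-blade bitmask to a coefficient (bit 0 is γ1, bit 1 is γ2, bit 2 is γ3). The helpers `blade_mul`, `gp`, and `lincomb` are hypothetical names introduced for this example only.

```python
from itertools import product

def blade_mul(a, b, sig):
    """Multiply two basis blades given as bitmasks; return (sign, bitmask)."""
    swaps, t = 0, a >> 1
    while t:                        # count the pairwise swaps needed to sort the factors
        swaps += bin(t & b).count("1")
        t >>= 1
    sign = -1 if swaps % 2 else 1
    for i, s in enumerate(sig):     # a repeated basis vector contracts to its square
        if (a & b) >> i & 1:
            sign *= s
    return sign, a ^ b

def gp(A, B, sig=(1, 1, 1)):
    """Geometric product of two clifs stored as {bitmask: coefficient}."""
    out = {}
    for (a, x), (b, y) in product(A.items(), B.items()):
        s, m = blade_mul(a, b, sig)
        out[m] = out.get(m, 0) + s * x * y
    return {m: c for m, c in out.items() if c != 0}

def lincomb(x, A, y, B):
    """Return x*A + y*B for scalars x, y and clifs A, B."""
    out = {}
    for coef, M in ((x, A), (y, B)):
        for m, c in M.items():
            out[m] = out.get(m, 0) + coef * c
    return {m: c for m, c in out.items() if c != 0}

P = {0b001: 1.0, 0b010: 2.0}                # P = g1 + 2 g2
Q = {0b001: 3.0, 0b010: 1.0, 0b100: 4.0}    # Q = 3 g1 + g2 + 4 g3

PQ, QP = gp(P, Q), gp(Q, P)
dot_PQ = lincomb(0.5, PQ, 0.5, QP)      # symmetric part: a pure scalar
wedge_PQ = lincomb(0.5, PQ, -0.5, QP)   # antisymmetric part: a pure bivector

print(dot_PQ)
print(wedge_PQ)
```

The symmetric part comes out with only a grade-0 term and the antisymmetric part with only grade-2 terms, and adding them back together recovers PQ.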

As another corollary of equation 6, we see that the dot product of two vectors is symmetric, while the wedge product of two vectors is antisymmetric:

$$
\begin{aligned}
P \cdot Q &= Q \cdot P \\
P \wedge Q &= -\,Q \wedge P
\end{aligned}
\tag{8}
$$

Terminology: The wedge product is sometimes called the exterior product. (This is not to be confused with the tensor product ⊗, which is sometimes called the outer product.)

In this document we prefer to call it the wedge product. We shall not be concerned with derivatives of any kind, exterior or otherwise.

Beware: Reference 8 introduces the term “antisymmetric outer product” to refer to the wedge product, and then shorthands it as “outer product”, which is highly ambiguous and nonstandard. It conflicts with the longstanding notion of outer product, as discussed in section 7.

Let’s investigate the properties of the dot product. We restrict attention to ordinary grade=1 vectors. We will show that the dot product defined here behaves just like the dot product you recall from ordinary vector algebra. For starters, we are going to argue that P·P behaves like a scalar.

One characteristic behavior of a scalar (in the geometric sense) is that if you rotate it, nothing happens. This is very unlike a vector, which changes if you rotate it (unless the plane of rotation is perpendicular to the vector).

As an introductory special case, consider rotating P by 180 degrees, assuming P lies in the plane of rotation. That’s easy to do: a 180 degree rotation transforms P into −P. We are pleased to see that this transformation leaves P·P unchanged. This is easy to prove, using the definition (equation 6) and using the fact that multiplication by scalars is commutative: Just factor out two factors of -1 from the product (−P)·(−P).

It’s also obvious that a rotation in a plane perpendicular to P leaves P·P unchanged, which is reassuring, although it doesn’t help distinguish scalars from anything else.

Tangential remark: More generally, we assert without proof that P·P is in fact invariant under any rotation (i.e. any amount of rotation in any plane). We are not ready to prove this, since we haven’t yet formally defined what we mean by rotation ... but we will pretty much insist that rotation leave P·P invariant, because we want P·P to be a scalar, and we want √(P·P) to be the length of P, and we want rotations to be length-preserving transformations. (This isn’t a proof, but it is an argument for plausibility and self-consistency.)

If P·P is a scalar, it is easy to show that P·Q is a scalar, for any vectors P and Q. Just define R := P + Q and then take the dot product of each side with itself:

| R·R = P·P + 2 P·Q + Q·Q (9) |

where every term except 2 P·Q is manifestly a scalar, so the remaining term must be a scalar as well.

This leaves us pretty much convinced that the dot product between any two vectors is a scalar. It can’t be a vector or anything else we know about. To get here, we didn’t do much more than postulate the existence of the geometric product, and then do a bunch of arithmetic.

Terminology: If vector Q is equal to P, or is equal to P multiplied by any nonzero scalar, we say that P and Q are parallel and we symbolize this as P ∥ Q.

Based on what we already know (mainly the symmetry properties, equation 8) we can deduce that if P and Q are parallel, then P∧Q = 0 and PQ = P·Q; that is:

| PQ = QP = P·Q iff P ∥ Q (10) |

which gives us a useful test for detecting parallel vectors.

Terminology: If P·Q = 0, we say that vectors P and Q are perpendicular or equivalently orthogonal and we symbolize this as P ⊥ Q.

If vectors P and Q are orthogonal, then P·Q = 0 and PQ = P∧Q; that is:

| PQ = −QP = P∧Q iff P ⊥ Q (11) |

In general, in the case where P and Q are not necessarily parallel or perpendicular, the geometric product will have two terms, in accordance with equation 7.

Lemma: We can resolve any vector P into a component $P_Q$ which is parallel to vector Q, plus another component $(P - P_Q)$ which is perpendicular to Q. Proof by construction:

$$P_Q := Q\,\frac{P \cdot Q}{Q \cdot Q} \tag{12}$$

This lemma is conceptually valuable, and frequently useful in practice. (See e.g. section 2.20.)

$P_Q$ is called the projection of P onto the direction of Q, or the projection of P in the Q-direction.

It is an easy exercise to show the following:

$$
\begin{aligned}
P \cdot Q &= P_Q \cdot Q \\
P \wedge Q &= (P - P_Q) \wedge Q
\end{aligned}
\tag{13}
$$
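The projection lemma is easy to try out numerically. The following Python sketch assumes ordinary Euclidean space and represents a vector as a tuple of components in an orthonormal basis; the function names `dot` and `project` are hypothetical.

```python
def dot(u, v):
    """Euclidean dot product of two vectors given as component tuples."""
    return sum(a * b for a, b in zip(u, v))

def project(p, q):
    """Component of p parallel to q, namely q (p.q)/(q.q). Requires q.q != 0."""
    k = dot(p, q) / dot(q, q)
    return tuple(k * c for c in q)

P = (3.0, 4.0, 0.0)
Q = (2.0, 0.0, 0.0)

P_par = project(P, Q)                               # parallel to Q
P_perp = tuple(a - b for a, b in zip(P, P_par))     # what is left over

print(P_par, P_perp)
print(dot(P_perp, Q))               # zero, so the leftover is perpendicular to Q
print(dot(P, Q), dot(P_par, Q))     # equal: only the parallel part contributes to the dot product
```

The division by Q·Q is why the construction needs Q to have a nonzero dot product with itself.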

Now, let’s investigate the properties of the wedge product of two vectors. We anticipate that it will be a bivector (or zero). We use the same line of reasoning as in section 2.7. We begin by considering the case where P∧Q is nonzero.

You can easily show that the wedge product P∧Q is invariant with respect to 180 degree rotation in the PQ plane. That is, just replace P by −P and Q by −Q and observe that nothing happens to the wedge product. This tells us the product is not a vector in the PQ plane. We remark without proof that this result is invariant under any rotation (however small or large) in the PQ plane.

Things get more interesting if we have more than two dimensions, because that allows us to investigate additional planes of rotation.

To make things easy to visualize, let us replace Q by Q′, where Q′ is the projection of Q in the directions perpendicular to P. We can always do this, using the methods discussed in section 2.8. According to equation 13, we know P∧Q′ is equal to P∧Q.

Choose any vector R perpendicular to both P and Q′. Rotate both vectors in the PR plane by 180 degrees. This transforms P into −P, but leaves Q′ unchanged (since it is perpendicular to the plane of rotation). That means the rotation flips the sign of the wedge product, P∧Q.

Similarly a rotation in the QR plane flips the sign of the wedge product. As a final check, we perform an inversion, i.e. the operation that transforms any vector V into −V, not limited to any plane of rotation. This leaves the wedge product unchanged.

Taking all these observations together, we find that P∧Q behaves exactly as we would expect a bivector to behave, based on the description given in section 2.1: a patch of surface with a direction of circulation around its edge. That is: the area is unchanged if we rotate things in the plane of the surface, but if we rotate things 180 degrees in a plane perpendicular to the surface, the surface flips over, reversing the sense of circulation.

The idea of wedge product generalizes to more than two vectors. For example, with three vectors, we generalize equation 6 as follows:

$$P \wedge Q \wedge R := \frac{1}{6}\,(PQR + QRP + RPQ - RQP - QPR - PRQ) \tag{14}$$

You can skip the following equation if you’re not interested, but if you want the fully general expression, it is:

$$q_1 \wedge q_2 \wedge q_3 \cdots q_r := \frac{1}{r!} \sum_{\pi} \operatorname{sign}(\pi)\; q_{\pi(1)}\, q_{\pi(2)}\, q_{\pi(3)} \cdots q_{\pi(r)} \tag{15}$$

where the sum runs over all possible permutations π. There are r! such permutations, and sign(π) is defined to be +1 for even permutations and −1 for odd permutations. This will be an object of grade r if all the vectors $q_1 \cdots q_r$ are linearly independent; otherwise it will be zero.
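The permutation sum can be carried out by brute force. The sketch below is one possible Python rendering, assuming three orthonormal spacelike basis vectors and a dictionary representation of clifs (basis-blade bitmask to coefficient, with bit 0 standing for γ1). The names `blade_mul`, `gp`, `perm_sign`, and `wedge` are hypothetical helpers, not part of any library.

```python
from functools import reduce
from itertools import permutations, product
from math import factorial

def blade_mul(a, b, sig):
    """Multiply two basis blades given as bitmasks; return (sign, bitmask)."""
    swaps, t = 0, a >> 1
    while t:                        # count the pairwise swaps needed to sort the factors
        swaps += bin(t & b).count("1")
        t >>= 1
    sign = -1 if swaps % 2 else 1
    for i, s in enumerate(sig):     # a repeated basis vector contracts to its square
        if (a & b) >> i & 1:
            sign *= s
    return sign, a ^ b

def gp(A, B, sig=(1, 1, 1)):
    """Geometric product of two clifs stored as {bitmask: coefficient}."""
    out = {}
    for (a, x), (b, y) in product(A.items(), B.items()):
        s, m = blade_mul(a, b, sig)
        out[m] = out.get(m, 0) + s * x * y
    return {m: c for m, c in out.items() if c != 0}

def perm_sign(p):
    """+1 for an even permutation, -1 for an odd one, by counting inversions."""
    inv = sum(p[i] > p[j] for i in range(len(p)) for j in range(i + 1, len(p)))
    return -1 if inv % 2 else 1

def wedge(*vectors):
    """Wedge product of r vectors: signed sum over all orderings, divided by r!."""
    r = len(vectors)
    total = {}
    for p in permutations(range(r)):
        term = reduce(gp, (vectors[i] for i in p))   # geometric product in the permuted order
        for m, c in term.items():
            total[m] = total.get(m, 0) + perm_sign(p) * c
    return {m: c / factorial(r) for m, c in total.items() if c != 0}

P = {0b001: 1.0, 0b010: 1.0}    # g1 + g2
Q = {0b010: 1.0}                # g2
R = {0b100: 1.0}                # g3
D = {0b001: 1.0, 0b010: 3.0}    # g1 + 3 g2, which lies in the plane of P and Q

print(wedge(P, Q, R))           # a pure grade-3 object
print(wedge(P, Q, D))           # empty, i.e. zero, because the vectors are linearly dependent
```

All the lower-grade terms cancel in the signed sum, which is the point of the antisymmetrization.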

People like to say “the wedge product is antisymmetric” ... but you have to be careful. It is antisymmetric with respect to interchange of any two vectors ... not any two clifs. For example:

$$s \wedge C = C \wedge s = sC \tag{16}$$

for any scalar s and any clif C.

Terminology: A blade is defined to be any scalar, any vector, or the wedge product of any number of vectors.

Terminology: Any clif that has a definite grade is called homogeneous. It is necessarily either a blade or the sum of blades, all of the same grade.

Example: The sum s + V (where s is a scalar and V is a vector) is not homogeneous. It does not have any definite grade. It is certainly not a blade.

Example: In four dimensions, the quantity γ0 γ1 + γ2 γ3 is homogeneous but is not a blade. It has grade=2, but cannot be written as just the wedge product between two vectors.

Terminology: As previously mentioned, we use the term clif to cover the most general element of the Clifford algebra. In the literature, the same concept is called a multivector, but we avoid that term because it is misleading. The problem may be due in part to the etymology suggested by the sequence:

| vector, bivector, trivector, ⋯, multivector (WRONG) (17) |

in contrast to the correct sequence:

| vector, bivector, trivector, ⋯, blade (RIGHT) (18) |

Terminology: Do not confuse a trivector with a 3-vector. A trivector is visualized as the 3-dimensional region spanned by three vectors. This region may be embedded in a space that is 3-dimensional or higher. In contrast, a 3-vector is a single vector that lives in a space with exactly 3 dimensions.

Terminology: To describe the grade of a blade, the recommended approach is to mention the grade explicitly. For example, we say a bivector has grade=2, while a trivector has grade=3, and so on.

There is another, non-recommended approach, in which a bivector is called a 2-blade, while a trivector is called a 3-blade, and so on. This is risky because of possible confusion as to whether the number refers to grade or dimension. Note that a 3-blade has grade=3, while a 3-vector has dimension=3, so confusion is to be expected.

We have already defined the wedge product of arbitrarily many vectors, according to equation 15.

We now define the wedge product between any blade and any blade. The rule is simple: unpack each blade as a wedge product, remove the parentheses, and apply equation 15:

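$$(a \wedge b \wedge \cdots) \wedge (p \wedge q \wedge \cdots) := a \wedge b \wedge \cdots \wedge p \wedge q \wedge \cdots \tag{19}$$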

where the RHS is defined by equation 15. As an obvious corollary of this definition, the wedge product has the associative property.

It is easy to see that for any two blades P and Q, which are of grade p and q respectively, the grade of P∧Q will be p+q (unless the product happens to be zero, in which case its grade is zero).

We can use that idea in the other direction, as follows: As discussed in section 2.16, the full geometric product PQ is liable to contain terms of all grades from |p−q| to p+q inclusive (counting by twos). The wedge product consists of just those terms with the highest possible grade. In symbols:

$$P \wedge Q = \langle PQ \rangle_{p+q} \tag{20}$$

Given this definition of blade wedge blade, we can generalize to any clif wedge clif, simply by saying the wedge product distributes over addition:

| V∧(A + B) = V∧A + V∧B (21) |

We hereby define the dot product of two blades to be the lowest-grade part of the geometric product. That is, if P has grade p and Q has grade q, then the dot product will have grade |p−q|. In symbols:

$$P \cdot Q := \langle PQ \rangle_{|p-q|} \tag{22}$$

Just as the wedge product was the top-grade part of the geometric product, the dot product is the bottom-grade part.

Let’s be clear: The definition of dot product depends more on its grade than on its symmetry. The dot product of two vectors is symmetric, while the dot product of a vector with a bivector is antisymmetric:

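$$
\begin{aligned}
P \cdot Q &= Q \cdot P && \text{(vector with vector)} \\
V \cdot B &= -\,B \cdot V && \text{(vector with bivector)}
\end{aligned}
\tag{23}
$$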

You can check that this more-general definition of dot product is consistent with what we said back in section 2.6 about the dot product of vectors.

Given this definition of blade dot blade, we can generalize to any clif dot clif, simply by saying that the dot product distributes over addition. That is,

| V·(A + B) = V·A + V·B (24) |

Suppose P, Q, and R are grade=1 vectors. Then P ∧ (Q·R) is simple. It’s just a vector parallel to P, magnified by the scalar quantity (Q · R).

In contrast, (P ∧ Q) · R is more interesting. Here are some important special cases:

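$$
\begin{aligned}
(x \wedge y) \cdot x &= -y \\
(x \wedge y) \cdot y &= x \\
(x \wedge y) \cdot z &= 0
\end{aligned}
\tag{25}
$$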

where x, y, and z are a set of orthonormal vectors, perhaps basis vectors. You can verify these results using equation 26. Other cases can be figured out using linear combinations of the above.

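We can summarize the situation as follows: We can consider (P ∧ Q) · R to be an operator acting on the vector R. It is partly a projection operator, partly a rotation operator, and partly a scale factor. That is to say: (1) it projects R onto the plane of P∧Q; (2) it rotates the projected vector by 90 degrees within that plane; and (3) it multiplies the result by the magnitude (area) of P∧Q.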

Those three operations can be performed in any order.

You can handle the general case by expanding everything in terms of the full geometric product, using the definition of wedge (equation 6b or equation 20) and the definition of dot (equation 22):

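$$(P \wedge Q) \cdot R = \langle (P \wedge Q)\, R \rangle_1 = \tfrac{1}{2}\, \langle PQR - QPR \rangle_1 \tag{26}$$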
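The special cases of a bivector dotted with a vector can be checked mechanically by forming the full geometric product and keeping the grade-1 part. The Python sketch below assumes x, y, z are orthonormal spacelike vectors stored as bitmask-keyed dictionaries (bit 0 is x, bit 1 is y, bit 2 is z). The helper names `blade_mul`, `gp`, `grade_part`, and `dot_bv` are hypothetical.

```python
from itertools import product

def blade_mul(a, b, sig):
    """Multiply two basis blades given as bitmasks; return (sign, bitmask)."""
    swaps, t = 0, a >> 1
    while t:                        # count the pairwise swaps needed to sort the factors
        swaps += bin(t & b).count("1")
        t >>= 1
    sign = -1 if swaps % 2 else 1
    for i, s in enumerate(sig):     # a repeated basis vector contracts to its square
        if (a & b) >> i & 1:
            sign *= s
    return sign, a ^ b

def gp(A, B, sig=(1, 1, 1)):
    """Geometric product of two clifs stored as {bitmask: coefficient}."""
    out = {}
    for (a, x), (b, y) in product(A.items(), B.items()):
        s, m = blade_mul(a, b, sig)
        out[m] = out.get(m, 0) + s * x * y
    return {m: c for m, c in out.items() if c != 0}

def grade_part(C, n):
    """Grade-n piece of C."""
    return {m: c for m, c in C.items() if bin(m).count("1") == n}

def dot_bv(A, B):
    """Dot product of a bivector and a vector (either order): the grade-1 part of AB."""
    return grade_part(gp(A, B), 1)

x, y, z = {0b001: 1}, {0b010: 1}, {0b100: 1}
xy = {0b011: 1}     # x wedge y equals the geometric product xy, because x and y are orthogonal

print(dot_bv(xy, x))    # minus y
print(dot_bv(xy, y))    # plus x
print(dot_bv(xy, z))    # zero: z is perpendicular to the plane
print(dot_bv(x, xy))    # plus y: reversing the order flips the sign
```

The same routine handles the general case by linearity: build P∧Q and R as sums of such terms and call `dot_bv` on them.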

The dot product between a vector and a bivector is antisymmetric:

$$(P \wedge Q) \cdot R = -\,R \cdot (P \wedge Q) \tag{27}$$

Beware: Equation 27 may come as a surprise, since the dot product between two vectors would be symmetric.

Also beware: A combination of wedge and dot does not exhibit the associative property:

$$(P \wedge Q) \cdot R \ne P \wedge (Q \cdot R) \tag{28}$$

Further beware: Wedge does not distribute over dot, nor vice versa:

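$$
\begin{aligned}
P \wedge (Q \cdot R) &\ne (P \wedge Q) \cdot (P \wedge R) \\
P \cdot (Q \wedge R) &\ne (P \cdot Q) \wedge (P \cdot R)
\end{aligned}
\tag{29}
$$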

We really shouldn’t expect it to. We expect products to distribute over sums, but both wedge and dot are products (not sums).

There is a very interesting way to visualize the wedge product. Consider the product C∧V, where C is a clif of any grade and V is a vector. The idea is to use C as a paintbrush, dragging C along V. The dragging motion is specified by the direction and magnitude of V. We keep C parallel to itself during the process. For instance, in figure 1 or figure 5, we form the parallelogram P∧Q by dragging the vector P along Q. Similarly in figure 1 we form the parallelepiped P∧Q∧R by dragging the parallelogram P∧Q along R.

The paintbrush picture is a little dodgy in the case where C is a scalar, but we can repair it by rewriting s∧V as 1∧(sV), for any scalar s. That is, we take the scalar 1 (which is pointlike) and drag it for a distance |sV| in the V direction. It just paints a copy of sV.

The orientation of the bivector P∧Q can be thought of as a “direction of circulation” marked on the parallelogram, namely moving in the P direction then moving in the Q direction. In figure 5, Q∧P = − P∧Q because they have the opposite direction of circulation. (They have the same magnitude, just opposite orientation.)

Chirality is a fancy word for handedness. It describes a situation where we can define the difference between right-handed and left-handed. The foundations of Clifford algebra do not require any notion of handedness. This is important, because many of the fundamental laws of physics are invariant with respect to reflection, and Clifford algebra allows us to write these laws in a way that makes manifest this invariance.

The exception is the Hodge dual, which requires a notion of handedness, as discussed in section 5. This can be considered an optional feature, added onto the basic Clifford algebra package.

The following three concepts are all optional, and are all equivalent: (1) A notion of “front” versus “back” side of the bivector; (2) a notion of chirality such as the “right-hand rule”; and (3) a notion of “clockwise” circulation.

We emphasize that for most purposes, we do not need to define any of these three concepts. We are just saying that if you did define them, they would all be equivalent. Except in section 5, we are not going to rely on any notion of clockwise or right-handedness or front-versus-back. Instead, we rely on orientation as specified by circulation around the edge of the bivector, which is completely geometrical and completely non-chiral.

We make a point of keeping things non-chiral, to the extent possible, because it tells us something about the symmetry of the fundamental laws of physics, as discussed in reference 3.

Here’s a convenient and easy-to-understand way of computing the full geometric product: Pick a basis. Project the factors onto the basis. Multiply everything term by term. Then simplify.

The multiplication itself follows directly from the distributive law, and the simplification follows directly from the fact that the basis vectors are orthonormal and they anticommute.

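For example, suppose we have two clifs A = γ1 + 2γ1γ2 and B = 3γ3 + 5γ1γ2γ3. In the table that follows, each entry is a term of B (the row label) multiplied on the right by a term of A (the column label), so the four entries add up to the product BA.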

| × | γ1 | 2γ1γ2 |
| --- | --- | --- |
| 3γ3 | 3γ3γ1 | 6γ3γ1γ2 |
| 5γ1γ2γ3 | 5γ1γ2γ3γ1 | 10γ1γ2γ3γ1γ2 |

To finish the job, we simplify the results as follows: Sort the gamma factors, keeping track of the parity of the permutation. That is, count the number of pairwise swaps (N) you need to do. Each swap picks up a factor of −1, so the overall parity is $(-1)^N$.

(Obviously you don’t swap a basis vector with its twin, and if you did it wouldn’t contribute to the parity.)

The result of the sort is:

| × | γ1 | 2γ1γ2 |
| --- | --- | --- |
| 3γ3 | 3γ3γ1 | 6γ1γ2γ3 |
| 5γ1γ2γ3 | 5γ1γ1γ2γ3 | −10γ1γ1γ2γ2γ3 |

Which reduces to:

| × | γ1 | 2γ1γ2 |
| --- | --- | --- |
| 3γ3 | 3γ3γ1 | 6γ1γ2γ3 |
| 5γ1γ2γ3 | 5γ2γ3 | −10γ3 |
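The pick-a-basis recipe translates almost directly into code. Here is a minimal Python sketch that reproduces the table, under a few assumptions of convenience: basis blades are stored as bitmasks (bit 0 is γ1, bit 1 is γ2, bit 2 is γ3), all three basis vectors are spacelike, and the helper names `blade_mul`, `gp`, and `name` are hypothetical. Because the code always sorts factors into ascending order, it reports the entry 3γ3γ1 in the equivalent form −3γ1γ3.

```python
from itertools import product

def blade_mul(a, b, sig):
    """Multiply two basis blades given as bitmasks; return (sign, bitmask)."""
    swaps, t = 0, a >> 1
    while t:                        # count the pairwise swaps needed to sort the factors
        swaps += bin(t & b).count("1")
        t >>= 1
    sign = -1 if swaps % 2 else 1
    for i, s in enumerate(sig):     # a repeated basis vector contracts to its square
        if (a & b) >> i & 1:
            sign *= s
    return sign, a ^ b

def gp(A, B, sig=(1, 1, 1)):
    """Geometric product of two clifs stored as {bitmask: coefficient}."""
    out = {}
    for (a, x), (b, y) in product(A.items(), B.items()):
        s, m = blade_mul(a, b, sig)
        out[m] = out.get(m, 0) + s * x * y
    return {m: c for m, c in out.items() if c != 0}

def name(m):
    """Human-readable label for a basis blade, e.g. 0b101 -> 'g1g3'."""
    return "".join(f"g{i + 1}" for i in range(3) if m >> i & 1) or "1"

A = {0b001: 1, 0b011: 2}    # A = g1 + 2 g1 g2
B = {0b100: 3, 0b111: 5}    # B = 3 g3 + 5 g1 g2 g3

BA = gp(B, A)               # rows times columns, as in the table
for m in sorted(BA):
    print(BA[m], name(m))
```

Swapping the arguments to `gp` gives AB instead, which in general is a different clif.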

In general, if you multiply an object of grade r by an object of grade s, the geometric product is liable to contain terms of all grades from |r−s| to |r+s|, counting by twos, as we see in the following:

Example: Let {γ1, γ2, γ3, γ4} be a set of orthonormal spacelike vectors, as discussed in section 2.20, and define:

| (30) |

Both A and B are homogeneous of grade 2. Indeed they are 2-blades. It is easy to calculate the geometric product:

| (31) |

and therefore

For high-grade clifs A and B, the geometric product AB generally leaves us with a lot of terms. As discussed in section 2.11 the bottom-grade term is the dot product (e.g. equation 32a). Meanwhile, as discussed in section 2.10, the top-grade term is the wedge product (e.g. equation 32c). Alas, there are no special names for the other terms, such as the middle term in the previous example (e.g. equation 32b).
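To see several grades show up at once, one can multiply two bivector blades numerically. The blades in the following Python sketch are chosen purely for illustration and are not necessarily the ones used in the example above: A = γ1γ2 and B = (γ1 + γ3)(γ2 + γ4), which is a blade because the two vector factors are orthogonal. Four orthonormal spacelike basis vectors are assumed, stored as bitmasks (bit 0 is γ1 through bit 3 for γ4), and the helper names are hypothetical.

```python
from itertools import product

SIG = (1, 1, 1, 1)      # assumed signature: four spacelike basis vectors

def blade_mul(a, b, sig):
    """Multiply two basis blades given as bitmasks; return (sign, bitmask)."""
    swaps, t = 0, a >> 1
    while t:                        # count the pairwise swaps needed to sort the factors
        swaps += bin(t & b).count("1")
        t >>= 1
    sign = -1 if swaps % 2 else 1
    for i, s in enumerate(sig):     # a repeated basis vector contracts to its square
        if (a & b) >> i & 1:
            sign *= s
    return sign, a ^ b

def gp(A, B, sig=SIG):
    """Geometric product of two clifs stored as {bitmask: coefficient}."""
    out = {}
    for (a, x), (b, y) in product(A.items(), B.items()):
        s, m = blade_mul(a, b, sig)
        out[m] = out.get(m, 0) + s * x * y
    return {m: c for m, c in out.items() if c != 0}

def grade_part(C, n):
    """Grade-n piece of C."""
    return {m: c for m, c in C.items() if bin(m).count("1") == n}

A = {0b0011: 1}                                     # g1 g2
B = gp({0b0001: 1, 0b0100: 1},                      # (g1 + g3)
       {0b0010: 1, 0b1000: 1})                      # (g2 + g4)

AB = gp(A, B)
grades = sorted({bin(m).count("1") for m in AB})

print(grades)                   # the grades present in AB
print(grade_part(AB, 0))        # bottom grade: the dot product
print(grade_part(AB, 2))        # middle grade: no special name
print(grade_part(AB, 4))        # top grade: the wedge product
```

The grades run from |2−2| to 2+2 counting by twos, as the general rule says they may.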

If we temporarily restrict attention to plain old vectors (grade=1 only), we reach the remarkable conclusion that the geometric product of two vectors has only two terms, a scalar term and a bivector term (assuming the bivector term is nonzero):

$$VW = \langle VW \rangle_0 + \langle VW \rangle_2 \tag{33}$$

which we can compare and contrast with equation 6, which we reproduce here:

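$$
\begin{aligned}
V \cdot W &= \frac{VW + WV}{2} \\
V \wedge W &= \frac{VW - WV}{2}
\end{aligned}
\tag{34}
$$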

still assuming V and W are vectors.

Sometimes one sees introductory discussions that start by presenting the properties of the dot product and wedge product, and then “define” the geometric product as

$$AB := A \cdot B + A \wedge B \qquad \text{(allegedly)} \tag{35}$$

which turns the discussion on its head relative to what we have done here. We started by postulating the existence of the geometric product, and then used it to work out the properties of dot and wedge.

They can get away with equation 35 when talking about vectors, but it doesn’t generalize well to objects of any grade higher or lower than 1, and it gets students started off on the wrong foot conceptually. Here is a simple counterexample:

| (36) |

where s is any scalar and C is any clif. Here is another important example:

| (37) |

In ordinary Euclidean space, whenever you compute the dot product of a vector with itself, the result is positive; that is, S·S > 0. In general, whenever this dot product is positive, we say that the vector S is spacelike.

In special relativity, i.e. in Minkowski space, we find that some vectors have the property that T·T < 0. In that case, we say that the vector T is timelike.

In a space where spacelike and timelike vectors exist, there will be other vectors with the property that N·N = 0. We say that such a vector N is null or equivalently lightlike.

For grade=1 vectors, I define the gorm of a vector to be the dot product of the vector with itself. The gorm is quadratic in the vector (because the dot product is bilinear); that is to say:

$$(aS) \cdot (aS) = a^2\, (S \cdot S) \qquad \text{for any scalar } a \tag{38}$$

This stands in contrast to the norm which is linear: |2S| = 2|S|. For a spacelike vector, the norm is √(S·S), and corresponds to the notion of proper length. Meanwhile, for a timelike vector, the norm is √(−T·T), which corresponds to the notion of proper time interval. For a null vector, the norm is zero, so we don’t need to worry about whether to use a + or − sign inside the square root.

To generalize the concept of gorm to higher-grade objects, see section 2.19.

We define the reverse of a clif as follows: Express the clif as a sum of products, and within each term, reverse the order of the factors. For example, the reverse of (P + Q∧R) is (P + R∧Q), where P, Q, and R are vectors (or perhaps scalars).

Notation: For any clif C, the reverse of C is denoted $C^\sim$.

Reverse has no effect on individual scalars or vectors, but is important for bivectors and higher-grade objects. Reverse plays an important role in the description of rotations, as discussed in reference 2. It also appears in the general definition of gorm, as discussed in section 2.19.

Note: If you are familiar with complex numbers, you can understand the reverse as a generalization of the notion of complex conjugate. See reference 1 for details.

In all generality, the gorm of any clif C is formed by multiplying C by the reverse of C, and keeping the scalar part of the product. That is:

$$\operatorname{gorm}(C) := \langle C^\sim\, C \rangle_0 \tag{39}$$

For example, if C = a + b γ1 + c γ2 + d γ1 γ2, then the gorm of C is $a^2 + b^2 + c^2 + d^2$ ... assuming γ1 and γ2 are spacelike basis vectors, as discussed in section 2.20. You can almost consider this a generalization of the Pythagorean formula, where in a non-abstract sense γ1 is perpendicular to γ2, and in some much more abstract sense the terms of each grade are “orthogonal” to the terms of every other grade.
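The general gorm formula can be spot-checked with a few lines of Python. This is only a sketch under stated assumptions: clifs are dictionaries from basis-blade bitmasks to coefficients, the metric signature is passed in explicitly as a tuple of +1 and −1 entries (one per basis vector, lowest bit first), and the names `blade_mul`, `gp`, `reverse`, and `gorm` are hypothetical helpers. The reverse of a grade-k basis blade is computed from the standard sign (−1)^(k(k−1)/2), which is what you get by counting the swaps needed to reverse k anticommuting factors.

```python
from itertools import product

def blade_mul(a, b, sig):
    """Multiply two basis blades given as bitmasks; return (sign, bitmask)."""
    swaps, t = 0, a >> 1
    while t:                        # count the pairwise swaps needed to sort the factors
        swaps += bin(t & b).count("1")
        t >>= 1
    sign = -1 if swaps % 2 else 1
    for i, s in enumerate(sig):     # a repeated basis vector contracts to its square
        if (a & b) >> i & 1:
            sign *= s
    return sign, a ^ b

def gp(A, B, sig):
    """Geometric product of two clifs stored as {bitmask: coefficient}."""
    out = {}
    for (a, x), (b, y) in product(A.items(), B.items()):
        s, m = blade_mul(a, b, sig)
        out[m] = out.get(m, 0) + s * x * y
    return {m: c for m, c in out.items() if c != 0}

def reverse(C):
    """Reverse the order of factors in every term of C."""
    out = {}
    for m, c in C.items():
        k = bin(m).count("1")
        out[m] = c * (-1) ** (k * (k - 1) // 2)
    return out

def gorm(C, sig):
    """Scalar part of (reverse of C) times C."""
    return gp(reverse(C), C, sig).get(0, 0)

# Euclidean plane: C = 1 + 2 g1 + 3 g2 + 4 g1 g2
C = {0b00: 1, 0b01: 2, 0b10: 3, 0b11: 4}
print(gorm(C, (1, 1)))              # 1 + 4 + 9 + 16

# Minkowski spacetime, with bit 0 standing for the timelike vector g0
MINK = (-1, 1, 1, 1)
print(gorm({0b0001: 2}, MINK))              # timelike vector 2 g0: negative
print(gorm({0b0010: 3}, MINK))              # spacelike vector 3 g1: positive
print(gorm({0b0001: 1, 0b0010: 1}, MINK))   # null vector g0 + g1: zero
```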

In any Euclidean space, the gorm of any nonzero blade is a positive number. However, in Minkowski spacetime (i.e. special relativity) the gorm of a timelike blade is negative. Also the gorm of a lightlike (aka null) blade is zero, even though the blade itself is nonzero.

Spacetime is as similar to ordinary Euclidean space as it possibly could be, without being completely identical. There is a minus sign that shows up in one place in the metric, and that has far-reaching ramifications.

Given any set of d linearly-independent non-null vectors, we can create an orthonormal basis set, i.e. a set of d mutually-orthogonal unit vectors. Actually we can create arbitrarily many such sets.

Proof by construction: Use the Gram-Schmidt orthonormalization algorithm. That is, use formulas like equation 12 to project out mutually-orthogonal components. Then divide by the norm to normalize them.
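The construction can be sketched in a few lines. This is a hedged illustration, not the author's code: it assumes the input vectors are given as components in some pre-existing orthonormal basis with signature (−, +, +, +), it writes the projection step directly in components, and it simply gives up if an intermediate vector turns out to be null. All names are illustrative.

```python
import math

SIGNATURE = (-1.0, 1.0, 1.0, 1.0)  # assumed: one timelike, three spacelike

def dot(p, q):
    """Dot product from components in the assumed orthonormal basis."""
    return sum(s * a * b for s, a, b in zip(SIGNATURE, p, q))

def gram_schmidt(vectors, tol=1e-12):
    """Return mutually orthogonal unit vectors spanning the same space."""
    basis = []
    for v in vectors:
        w = list(v)
        for e in basis:
            # Project out the part of w along e; dot(e, e) is +1 or -1.
            coeff = dot(w, e) / dot(e, e)
            w = [wi - coeff * ei for wi, ei in zip(w, e)]
        g = dot(w, w)
        if abs(g) < tol:
            raise ValueError("null or zero vector encountered; cannot normalize")
        n = math.sqrt(abs(g))  # norm: sqrt(+gorm) or sqrt(-gorm)
        basis.append([wi / n for wi in w])
    return basis

vectors = [
    (1.0, 0.0, 0.0, 0.0),
    (1.0, 2.0, 0.0, 0.0),
    (0.0, 1.0, 1.0, 0.0),
    (1.0, 1.0, 1.0, 1.0),
]
basis = gram_schmidt(vectors)
for e in basis:
    print(e, dot(e, e))
```

Feeding the vectors in a different order, or starting from a different set, generally produces a different orthonormal basis, which is one way to see that arbitrarily many such sets exist.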

In Minkowski spacetime, any such basis will have the following properties:

| γ0 γ0 = −1 (40) |

| γ1 γ1 = γ2 γ2 = γ3 γ3 = +1 (41) |

and

| γi γj = − γj γi for all i ≠ j (42) |

In this basis set, γ0 is the timelike unit vector, while γ1, γ2, and γ3 are the spacelike unit vectors.

Remark: The minus sign in equation 40 stands in contrast to the plus sign in equation 41. This one difference in signs is essentially the only thing that sets special relativity apart from ordinary Euclidean geometry. This point is discussed more fully in reference 9.

In ordinary Euclidean space, the story is the same, except there are no timelike vectors, so you simply forget about γ0 and equation 40.

Given a basis, we can write any arbitrary vector V as a linear combination of the basis vectors:

| V = a γ0 + b γ1 + c γ2 + d γ3 (43) |

for suitable scalars a, b, c, and d.

Terminology: These scalars (a, b, c, and d) are sometimes called the components of V in the chosen basis. They can also be called the matrix elements of V in the chosen basis.

Terminology: The vector a γ0 is sometimes called the component of V in the chosen γ0 direction (and similarly for the other terms on the RHS of equation 43). Such a vector can also be called the projection of V onto the chosen direction.

It is usually obvious from context which definition of “component” is intended. If you want to avoid ambiguity in your writing, you can avoid the word “component” and instead say “matrix element” or “projection” as appropriate.

We can also write the expansion of V as

| V = V0 γ0 + V1 γ1 + V2 γ2 + V3 γ3 (44) |

where again the Vi are called the components (or matrix elements) of V in the chosen basis.

Beware: Even though Vi is a component of the vector V and is a scalar, please do not think of γi in the same way. Each γi is a vector unto itself, not a scalar. The i in γi tells which vector, whereas the i in Vi tells which component of the vector. There is no advantage in imagining some super-vector that has the γi vectors as its components.

Given two vectors P and Q, the dot product can be expressed in terms of their components as follows:

| P·Q = −P0 Q0 + P1 Q1 + P2 Q2 + P3 Q3 (45) |

assuming timelike γ0 and spacelike γ1, γ2, and γ3.

Note that this is not the definition of dot product; it is merely a consequence of the earlier definition of dot product (equation 7) and the definition of components (equation 44).

In particular: Non-experts sometimes think the dot product is “defined” by adding up the product of corresponding components, but that is not true in general, as you can see from the minus sign in front of the first term in equation 45. The bogus definition is reinforced by software library routines that compute a so-called “dot product” by blithely multiplying corresponding elements. (If all the basis vectors are spacelike, then you can get away with just multiplying the corresponding components, but keep in mind that that’s not the definition, and not the general rule.)
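Equation 45 can be recovered in a few lines. The sketch below assumes that the dot product of two vectors is the symmetric part of their geometric product, and it uses the basis properties in equations 40 through 42. The symbol η is introduced here only as shorthand for the resulting table of signs.

```latex
\begin{align*}
P \cdot Q
  &= \Big(\sum_i P_i \gamma_i\Big) \cdot \Big(\sum_j Q_j \gamma_j\Big)
   = \sum_{i,j} P_i Q_j \,(\gamma_i \cdot \gamma_j) \\
\gamma_i \cdot \gamma_j
  &= \tfrac{1}{2}\,(\gamma_i \gamma_j + \gamma_j \gamma_i)
   = \eta_{ij},
   \qquad \eta = \mathrm{diag}(-1, +1, +1, +1) \\
P \cdot Q
  &= -P_0 Q_0 + P_1 Q_1 + P_2 Q_2 + P_3 Q_3
\end{align*}
```

The off-diagonal terms vanish because distinct basis vectors anticommute, and the lone minus sign comes from γ0 γ0 = −1.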

Useful tutorials on various aspects of Clifford algebra and its application to physics include reference 10, reference 11, and reference 8.

The number of components required to describe a clif depends on the number of dimensions involved. The first few cases are shown in this table:

| (46) |

where s means scalar, v means vector, b means bivector, t means trivector, and q means quadvector. You can see that it takes the form of Pascal’s triangle. On each row, the total number of components is 2^D.
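The counts can be generated directly from binomial coefficients: in D dimensions there are "D choose g" independent basis blades of grade g. The short sketch below prints the rows for D = 1 through 4; that range, and the helper name `components_by_grade`, are choices made here for illustration.

```python
from math import comb

GRADE_LABELS = ["s", "v", "b", "t", "q"]

def components_by_grade(D):
    """Number of independent components of each grade 0..D in D dimensions."""
    return [comb(D, g) for g in range(D + 1)]

for D in range(1, 5):
    counts = components_by_grade(D)
    row = "  ".join(f"{GRADE_LABELS[g]}={n}" for g, n in enumerate(counts))
    print(f"D={D}: {row}  (total {sum(counts)})")
```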

When we speak of “the” dimension, it refers to the dimensionality of whatever space you are actually using. This includes the case where, for whatever reason, attention is restricted to some subspace of the natural universe. For example, if your universe contains three dimensions, but you are only considering a single plane of rotation, then the D=2 description applies. Similarly, if you live in four-dimensional spacetime, but are only considering rotations in the three spacelike directions, then the D=3 description applies.

To say the same thing another way, Clifford algebra is remarkably agnostic about the existence (or non-existence) of dimensions beyond the ones you are actually using. (This makes the geometric product much more elegant than the old-fashioned vector cross product, which requires you to think about a third dimension, even if you only started out with two dimensions.)

If you find that you need D dimensions to describe the laws of physics, that sets a lower bound on the dimensionality of the universe you live in. It is not an upper bound, i.e. it provides not the slightest evidence against the existence of additional, unseen dimensions, such as arise in string theory.

This section doesn’t explain the physics. Sorry. The purpose here is just to collect some useful formulas. In particular, if you ever forget the sign conventions, this may serve as a reminder.

Torque is:

| τ = r ∧ F (47) |

The angular momentum of a pointlike object is:

| L = r ∧ p (48) |

where r is the object’s position and p is its momentum (i.e. its ordinary linear momentum).

The fundamental laws of physics say that both linear momentum and angular momentum are conserved.

The object’s angular velocity is given by

| (49) |

where v is the velocity.

It is often useful to find the linear velocity (v) in terms of the angular velocity (ω) and the position:

| (50) |

as you can verify by plugging the definition of ω (from equation 49) into the RHS of equation 50. You will also need the definition of wedge product, i.e. equation 6. Beware the minus sign in the last line of equation 50; multiplying a vector times a bivector is not commutative.

This section should be skipped on first reading. For most purposes, the dot product – as defined by equation 22 or equation 51d – is the only type of dot product you need to know. On the other hand, there are occasionally situations where one of the other products defined in equation 51 allows something to be expressed more simply; see section 5.1 for an example.

By way of background, recall the definition of the product P := A·B from elementary vector algebra. This is known as the dot product, inner product, or scalar product. The elementary definition applies only to the case where A and B are ordinary grade=1 vectors. In this case, the product has several interesting properties:

We wish to generalize this notion of product, so that it applies to arbitrary clifs. There are several ways of doing this, depending on which properties we are most interested in preserving.

|

Loosely speaking, these all try to capture the idea that the contraction is the lowest-grade piece of the geometric product. The Hestenes inner product is almost never a good idea; we mention it here just so you don’t get confused if you see it in the literature. Beware that people who like the Hestenes inner product typically write it with a simple •, so it can easily be confused with the more conventional dot product. Similarly, people who like the scalar product typically write it with a simple •, so now we have three different products represented by the same symbol. In cases where it is necessary to distinguish the conventional dot product (as defined in equation 22 or equation 51d) from other things, it can be called the “fat” dot product. For details, see reference 12.

Mnemonic: In equation 51a and equation 51b, the vertical riser on the operator symbol ( ⌞ or ⌟ ) is on the “big” side. That is, in the expression A ⌞ B, the left side is the big side; this expression is zero unless A is grade-wise bigger than B.

Mnemonic: I refer to A ⌞ B as the “forward” contraction because the grade is computed by subtraction in the expected order: (grade of A) minus (grade of B). In the literature, the conventional English name for this is “right” contraction. The mnemonic is, the “right” way around. This is a pun on right (the opposite of left) and right (the opposite of backwards).

Non-mnemonic: Beware that the symbol ⌞ resembles the letter “L” but the English name for this is “right contraction” (not “left contraction”). Do not think of it as a letter.

If A and B are not homogeneous, we extend these definitions by using the fact that each of these products distributes over addition. That gives us the following formulas:

|

The Hodge dual is a linear operator. In d dimensions, it maps a blade of grade g into a blade of grade d−g and vice versa. I call this the grade-flipping property. Two particularly-common cases are illustrated in figure 6 and figure 7.

As a first example, the Hodge dual always converts an omnivector into the corresponding pseudoscalar. In particular, in d=3, it converts 3ijk into a pseudoscalar 1. As another example, in d=3, the Hodge dual converts a bivector to the corresponding pseudovector. Meanwhile, in d=4, it converts a trivector into the corresponding pseudovector.

Example: In d=3, the familiar “triple scalar product” a·b×c produces a pseudoscalar. It’s called pseudo because if you look at it in a mirror, it changes sign. This stands in contrast to an ordinary non-pseudo scalar, such as the number of beans, which is unaffected by a reflection. Questions of symmetry like this are of the utmost importance in physics.

Terminology: Another name for pseudovector is axial vector. The familiar cross product in d=3 produces a pseudovector aka axial vector. For more on this, see section 5.3.

Axial vectors (which are badly behaved under reflection) stand in contrast to plain old polar vectors (which are well behaved). (I have no idea where the name “polar” comes from.)

More terminology: There is a one-to-one correspondence between omnivectors and pseudoscalars, but they are not the same thing. Similarly, there is a one-to-one correspondence between penomnivectors and pseudovectors, but they are not the same thing. Seriously: Grade=d is not the same as grade=0. Grade=d−1 is not the same as grade=1. I mention this because in the literature there is a lamentable tendency to apply the term “pseudoscalar” to the top-grade objects, when they really should be called omnivectors.

Idea #1: Increasing grade: Let V be any vector (grade=1) and let C be an arbitrary blade (grade=g). Then the wedge product V∧C will have grade=g+1, unless the product vanishes. We can summarize this by saying that wedge-multiplying by a vector raises the grade by 1, roughly speaking.

Idea #2: Decreasing grade: Let V be any vector (grade=1) as before, and let D be an arbitrary blade that is not a scalar (grade=g, where g>0). Then the dot product V·D will have grade=g−1, unless the product vanishes. We can summarize this by saying that dot-multiplying by a vector lowers the grade by 1, roughly speaking.

Optional tangential remark: We can simplify the previous paragraph by using the left-contraction operator defined in section 4. Let V be any vector (grade=1) as before, and let C be an arbitrary blade (grade=g), scalar or otherwise. Then the left-contraction V ⌟ C will have grade=g−1, unless the product vanishes. We can summarize this by saying that left-contracting with a vector lowers the grade by 1, roughly speaking.

Combining ideas #1 and #2, we can say that wedge-multiplying is in some sense the grade-flipped version of dot-multiplying. That is, we should be able to define a correspondence, where wedge-multiplying moves us downward in the left column of figure 7, while dot-multiplying moves us upward in the right column. In fact, we can use this idea to define the Hodge dual. (Note that figure 6 and figure 7 do not fully define the Hodge dual; they merely describe some of its features.)

Start with a blade B with grade=k. Let X denote its Hodge dual, i.e. X := B§. We are not yet able to calculate X, but we know that whatever it is, it must have grade d−k. We now find some other blade A with the same grade as the original B, namely grade=k. The wedge product A∧X will be an omnivector (assuming it does not vanish). That is to say, it will have the largest possible grade, namely grade=d.

Meanwhile, we can also form the dot product, A·B. This will have the smallest possible grade, namely grade=0.

Finally, we choose some unit omnivector, which we denote I. If we have an ordered set of basis vectors, the obvious choice is to multiply all of them together in order, so that I = γ1γ2⋯γd. (Note that by assigning an order to the basis vectors, we introduce a notion of chirality. This is a significant addition, insofar as the foundations of Clifford algebra do not require chirality, as discussed in section 2.14.)

At this point we have all the tools needed to define the Hodge dual.

where B§I denotes the Hodge dual of B with respect to I. You can see the grade-flipping property at work here.

Under mild conditions, there is always one and only one X that satisfies equation 53a, so the Hodge dual exists and is unique. We require that in some basis, every basis vector that appears in B must also appear in I.

Note that grade-flipping does not change the cardinality of the basis. For example, there is always one basis scalar and one basis omnivector. In d dimensions there are d basis vectors with grade=1 and d basis multivectors with grade=d−1, which you could call penomnivectors (“almost omnivectors”).

To repeat, the key property is, for any given B:

| (54) |

Fundamentally, the Hodge dual is a binary operator, which is to say that B§I depends on both B and I. However, in a fairly wide range of practical applications, there is an obvious choice for I, which is then called the “preferred” omnivector. It is therefore conventional to simplify the notation by writing the dual of B as *B, where this “*” is a unary prefix operator. This is conventional, but it is not entirely wise. That’s because sometimes we want to think about physics in four-dimensional Minkowski spacetime, and sometimes we want to restrict attention to three-dimensional Euclidean space. The Hodge dual is very different in these two cases, because the relevant omnivector is different.

Example: Suppose B=7I (i.e. an omnivector). Then B§I=7 (i.e. a pseudoscalar). This is true in any number of dimensions, from d=1 on up.

Example: In d=3, suppose B = 5γ1 γ2 (i.e. a bivector in the xy plane). Then B§I = 5γ3 = [0, 0, 5] (i.e. a pseudovector in the z direction), badly behaved under reflection. This assumes I = γ1γ2γ3.

Remark: equation 53 provides an implicit definition. There exist explicit cut-and-dried algorithms for calculating the Hodge dual of B, especially if B is known in terms of components in some basis. See the discussion in reference 13, or see the actual code in reference 14.
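As a hedged illustration of what such an explicit calculation can look like, the sketch below uses one particular recipe: in Euclidean space, multiply the reverse of B by I using the geometric product. This recipe is an assumption made here because it reproduces the two examples above; it is not claimed to be the algorithm used in reference 13 or reference 14. Clifs are stored as dictionaries from basis-blade bitmask to coefficient (bit 0 for γ1, bit 1 for γ2, bit 2 for γ3), and all names are illustrative.

```python
def reorder_sign(a, b):
    """Sign from sorting the basis vectors of blade a followed by blade b."""
    a >>= 1
    swaps = 0
    while a:
        swaps += bin(a & b).count("1")
        a >>= 1
    return -1 if swaps % 2 else 1

def geometric_product(x, y):
    """Geometric product of two clifs; Euclidean metric assumed."""
    out = {}
    for a, ca in x.items():
        for b, cb in y.items():
            blade = a ^ b
            out[blade] = out.get(blade, 0) + reorder_sign(a, b) * ca * cb
    return out

def reverse(x):
    """Reverse: a grade-g blade picks up the sign (-1)**(g*(g-1)/2)."""
    out = {}
    for blade, c in x.items():
        g = bin(blade).count("1")
        out[blade] = -c if (g * (g - 1) // 2) % 2 else c
    return out

def hodge(B, I):
    """Assumed recipe for the dual of B with respect to I: reverse(B) times I.
    Every basis vector appearing in B is expected to appear in I as well."""
    product = geometric_product(reverse(B), I)
    return {blade: c for blade, c in product.items() if c != 0}

I3 = {0b111: 1}                    # I = γ1γ2γ3
print(hodge({0b111: 7}, I3))       # omnivector 7I  -> scalar part 7
print(hodge({0b011: 5}, I3))       # bivector 5γ1γ2 -> 5γ3
```

Because `hodge` takes I as an explicit argument, the same function can be called with I2 = γ1γ2 or any other unit omnivector of the subspace in use, which mirrors the B§I notation rather than the unary *B notation.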

If you’re not using the Hodge dual, Clifford algebra has the following remarkable property, which I find starkly beautiful: Suppose you are working with three basis vectors, γ1, γ2, and γa. You know that your space must have at least three dimensions, but you have no way of knowing whether that is merely a subspace of some much larger space. That is, there could be other basis vectors that you do not know about. The remarkable thing is, you do not need to know about them. That’s because Clifford algebra is closed under the usual operations (dot product, wedge product, addition, et cetera). Furthermore, you do not need to arrange your basis vectors in order. You do not need to know whether γa comes before the others, or after, or in between. You do not have a “right-hand rule” and you do not need one.

I call this property “subspace freedom”.

In contrast, if you write the Hodge dual in the form *B, it does not permit subspace freedom. That’s because *B implicitly depends on some specific, chosen, “preferred” unit omnivector. According to the usual way of thinking about *B, the grade of the omnivector tells you exactly how many basis vectors there are. Furthermore, the choice of omnivector defines a notion of right-handed versus left-handed, because if you perform an odd permutation of the basis vectors, the sign of the “preferred” omnivector changes.

In contrast, writing the Hodge dual in the form B§I preserves subspace freedom and makes it unnecessary to choose a “preferred” omnivector. There could be other basis vectors you don’t know about and don’t need to know about, so long as they do not appear in B or in I.

In this subsection, we restrict attention to d=3. We choose our preferred unit omnivector in the obvious way, namely I=γ1γ2γ3. The order in which the basis vectors appear here defines a notion of handedness.

The cross product X×Y is a pseudovector. It can be calculated in terms of the bivector X∧Y as follows:

where in equation 55b the operator §I is our representation for the Hodge dual, as defined in section 5.1. Meanwhile, equation 55c expresses exactly the same thing, using the more conventional unary prefix * notation.

For example, this establishes a correspondence between a rotation in the XY plane and a rotation around the Z axis. Two ways of thinking about the same thing.

Note that the norm is unchanged:

| (56) |

This gives us a nice simple recipe: Everywhere you see a cross product X×Y, cross it out and replace it with (X∧Y)§. This makes the physics easier to understand. Rotations in the XY plane are easier to keep track of compared to rotations around the Z axis. The equations of electromagnetism are vastly easier to understand when written in terms of bivectors.
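The correspondence between the cross product and the dual of the wedge product can be checked in components. The following is a minimal sketch, assuming d=3, Euclidean space, and I = γ1γ2γ3; the bivector is stored by its coefficients on γ1γ2, γ2γ3, and γ3γ1, and the function names are illustrative.

```python
import math

def wedge(x, y):
    """Coefficients of the bivector x∧y on the blades γ1γ2, γ2γ3, γ3γ1."""
    return {
        "12": x[0] * y[1] - x[1] * y[0],
        "23": x[1] * y[2] - x[2] * y[1],
        "31": x[2] * y[0] - x[0] * y[2],
    }

def hodge_of_bivector(b):
    """Dual in d=3 with I = γ1γ2γ3: γ2γ3 -> γ1, γ3γ1 -> γ2, γ1γ2 -> γ3."""
    return (b["23"], b["31"], b["12"])

def textbook_cross(x, y):
    """The familiar component formula, which bakes in a right-hand rule."""
    return (
        x[1] * y[2] - x[2] * y[1],
        x[2] * y[0] - x[0] * y[2],
        x[0] * y[1] - x[1] * y[0],
    )

X = (1.0, 2.0, 3.0)
Y = (4.0, 5.0, 6.0)
B = wedge(X, Y)
print(hodge_of_bivector(B), textbook_cross(X, Y))

# The bivector and the pseudovector have the same norm.
norm_bivector = math.sqrt(sum(c * c for c in B.values()))
norm_cross = math.sqrt(sum(c * c for c in textbook_cross(X, Y)))
print(norm_bivector, norm_cross)
```

Only `hodge_of_bivector` depends on the choice of I; the wedge product itself needs no notion of handedness.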

Here’s a simple yet interesting and important example. Consider the triple scalar product, representing the volume of the parallelepiped spanned by three vectors. We can write that as:

| (57) |

The triple wedge product X∧Y∧C is an omnivector. The dual thereof is a pseudoscalar, badly behaved under reflection. For more about the volume of a parallelepiped, see reference 7.

There is an easy way to remember all this: As we said earlier, dot is a grade-flipped version of wedge, and vice versa. Dot lowers the grade, whereas wedge raises the grade. Hodge then dot is somewhat like wedge then Hodge, as in equation 57.

Again, the norm is unchanged:

| (58) |

Beware that the notion of "Hodge then dot" versus "dot then Hodge" only works for vectors. For example, if E and B are grade=1 vectors, then the Poynting vector is E×B which is equal to (E∧B)§, but not equal to (E§)·(B§).

In more advanced situations such as electrodynamics, you often find that there is an equation involving a cross product and a “similar” equation involving a dot product. In this case you may need to combine the two equations and replace both products with the full geometric product. This generally requires more than a recipe, i.e. it may require actually understanding what the equations mean. For electrodynamics in particular, the right answer involves promoting the equations from 3D to 4D, and replacing two vector fields by one bivector field. See reference 4.

Given a dirk D, calculating the Hodge dual is “almost” like taking the dot product with the omnivector I, but not quite. The dot product picks out all the right basis vectors, but it may introduce an unwanted sign. In Euclidean space, everything works smoothly if we first permute the omnivector to dictionary order and permute the dirk to reverse dictionary order. We count how many swaps it takes to do that, and fudge the sign accordingly. See reference 15.

Given an omnivector of grade D and a blade B of grade g, the Hodge round trip §§ is equal to (−1)^(g(D−g)) s times B. Here s is the signature of the basis, i.e. 1 for Euclidean space and −1 for Minkowski space.

Therefore in Euclidean space, scalars, bivectors, and omnivectors are always unchanged by the round trip. Also whenever D is odd, everything is unchanged. It’s the odd grades in even dimensionalities that flip.
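These statements follow from the parity of g(D−g), and they can be tabulated in a few lines. The sketch below simply evaluates the round-trip factor stated above; the function name `round_trip_sign` is illustrative.

```python
def round_trip_sign(D, g, s=1):
    """Factor multiplying a grade-g blade after two Hodge duals in D dimensions.
    s is +1 for Euclidean space and -1 for Minkowski space."""
    return (-1) ** (g * (D - g)) * s

# Euclidean case: one row per dimension, one entry per grade 0..D.
for D in range(1, 6):
    signs = [round_trip_sign(D, g) for g in range(D + 1)]
    print(f"D={D}:", " ".join("+" if sign > 0 else "-" for sign in signs))
```

In the printed table, the only minus signs appear at odd grades in even dimensions.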

I prefer the wedge product for several reasons:

First and foremost is the simplest of practical pedagogical reasons: I can get good results using wedge products. The more elementary the context, and the more unprepared the students, the more helpful wedge products are. I can visualize the wedge product, and I can get the students to visualize it. (This stands in stark contrast to the cross product, which tends to be very mysterious to students.)

Specifically: Consider gyroscopic precession. I have done the pedagogical experiment more times than I care to count. I have tried it both ways, using cross products (pseudovectors) and/or using wedge products (bivectors). Precession can be understood using little more than the addition of bivectors, adding them edge-to-edge as described in section 2.3. I can use my hands to represent the bivectors to be added, or (even better) I can show up with simple cardboard props.

Getting students to visualize angular momentum as a bivector in the plane of rotation takes no time at all. In contrast, getting them to visualize it as a pseudovector along the axis of rotation is a heavy lift; even a bright student is going to struggle with this, and the not-so-bright students are never going to get it.

Maybe this just means I’m doing a lousy job of explaining cross products, but even if that’s true, I’ll bet there are plenty of teachers out there who find themselves in the same situation and would benefit from taking the bivector approach.

Another reason for preferring the geometrical approach, i.e. the Clifford algebra approach, is that (compared to cross products) it does a much better job of modeling the symmetries of the real world.

Remember, the real world is what it is and does what it does. When we write down an equation, it may or may not be an apt model of the real world. Often an equation involving a cross product will have a chirality (“handedness”) to it, even when the real-world physics is not chiral. For more on this, see reference 3.

Additional reasons for preferring the geometric approach have to do with its connections to other mathematical and physical ideas, as discussed in section 1. (This is in contrast to the cross product, which is not nearly so extensible.)

There are always multiple ways of presenting the same material. In the case of Clifford algebra, it would have been possible to leap to the idea of a basis set very early on. The sequence would have been: (a) establish a few fundamental notions; (b) set forth the behavior of the basis vectors according to equation 41 and equation 42; (c) express all vectors, bivectors, etc. in terms of their components relative to this basis; and (d) derive the main results in terms of components. Let’s call this the basis+components approach.

Some students prefer the basis+components approach because it is what they are expecting. They think a vector, by definition, is nothing more than a list of components.

The alternative is to think of a vector as a thing unto itself, as an object with geometric properties, independent of any basis. Let’s call this the geometric approach.

We must ask the question, which approach is more elementary, and which approach is more sophisticated? Also, which approach is more abstract, and which approach is more closely tied to physical reality?

Actually those are trick questions; they look like dichotomies, but they really aren’t. The answer is that the geometric approach is more physical and more abstract. It is more elementary and more sophisticated.

| You can use a pointed stick as a physical model of a vector, independent of any basis. Wave it around in front of the class. | Show how vectors can be added by putting them tip-to-tail. |

| Use a flat piece of cardboard as a physical model of a bivector. | Show how bivectors can be added by putting them edge-to-edge. |

In physics, almost everything worth knowing can be expressed independently of any basis. You should be suspicious of anything that appears to depend on a particular chosen basis. For more about this, see reference 16. Observe that everything in section 2 is done without reference to any basis; the definitions of “basis” and “components” are not even mentioned until the very end.

The real practical advantage of the basis+components approach is that it is well suited for numerical calculations, including computer programs. Reference 2 includes a program that is useful for keeping track of compound rotations in D=3 space.

Consider the contrast:

| The geometric approach is physical yet algebraic, axiomatic, and abstract; it is elementary yet sophisticated. | The basis+components approach is more numerical, i.e. more suited for computer programs. |

Some students have prior familiarity with one approach, or the other, or both, or neither.

By longstanding convention, the term “outer product” of two vectors (V and W) refers to the tensor product, namely

| (59) |

where T is a second-rank tensor.

If we expand things in terms of components, relative to some basis, we can write:

| (60) |

For example:

| (61) |

In general, T itself has no particular symmetry, but we can pick it apart into a symmetric piece plus an antisymmetric piece:

| (62) |

The second term on the RHS of equation 62 is the wedge product V∧W. The trace of the first term is the dot product.
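A small numerical check makes the decomposition concrete. The sketch below assumes Euclidean components in D=3 and the usual split of a matrix into half the sum and half the difference of itself and its transpose; the helper names are illustrative.

```python
def outer(v, w):
    """Tensor (outer) product in components: T[i][j] = v[i] * w[j]."""
    return [[vi * wj for wj in w] for vi in v]

def transpose(t):
    return [list(row) for row in zip(*t)]

def combine(a, b, sign):
    """Elementwise (a + sign * b) / 2."""
    return [[(x + sign * y) / 2 for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def trace(t):
    return sum(t[i][i] for i in range(len(t)))

V = (1.0, 2.0, 3.0)
W = (4.0, 5.0, 6.0)

T = outer(V, W)
symmetric = combine(T, transpose(T), +1)
antisymmetric = combine(T, transpose(T), -1)

# Trace of the symmetric piece equals the (Euclidean) dot product.
print(trace(symmetric), sum(v * w for v, w in zip(V, W)))

# The antisymmetric piece carries the wedge product: summing its entries
# against γi γj over all i, j gives (V1 W2 - V2 W1) γ1γ2 plus similar terms.
print(antisymmetric[0][1], (V[0] * W[1] - V[1] * W[0]) / 2)
```

The nine numbers in T thus split into six for the symmetric piece and three for the antisymmetric piece, the latter matching the three bivector components in D=3.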

Beware: Reference 8 introduces the term “antisymmetric outer product” to refer to the wedge product, and then shorthands it as “outer product”, which is highly ambiguous and nonstandard. It conflicts with the longstanding conventional definition of outer product, which consists of all of T, not just the antisymmetric part of T.

I wrote a “Clifford algebra desk calculator” program. It knows how to do addition, subtraction, dot product, wedge product, full geometric product, reverse, Hodge dual, and so forth. Most of the features work in arbitrarily many dimensions.

Here is the program’s help message. See also reference 14.

Desk calculator for Clifford algebra in arbitrarily many

Euclidean dimensions. (No Minkowski space yet; sorry.)

Usage: ./cliffer [options]

Command-line options include

-h print this message (and exit immediately).

-v increase verbosity.

-i fn take input from file 'fn'.

-pre fn take preliminary input from file 'fn'.

-- take input from STDIN

If no input files are specified with -i or --, the default is an

implicit '--'. Note that -i and -pre can be used multiple times.

All -pre files are processed before any -i files.

Advanced usage: If you want to make an input file into a

self-executing script, you can use "#! /path/to/cliffer -i" as

the first line. Similarly, if you want to do some

initialization and then read from standard input, you can use

"#! /path/to/cliffer -pre" as the first line.

Ordinary usage example:

# compound rotation: two 90 degree rotations

# makes a 120 degree rotation about the 1,1,1 diagonal:

echo -e "1 0 0 90° vrml 0 0 1 90° vrml mul @v" | cliffer

Result:

0.57735 0.57735 0.57735 2.09440 = 120.0000°

Explanation:

*) Push a rotation operator onto the stack, by

giving four numbers in VRML format

X Y Z theta

followed by the "vrml" keyword.

*) Push another rotation operator onto the stack,

in the same way.

*) Multiply them together using the "mul" keyword.

*) Pop the result and print it in VRML format using

the "@v" keyword

On input, we expect all angles to be in radians. You can convert

from degrees to radians using the "deg" operator, which can be

abbreviated to "°" (the degree symbol). Hint: Alt-0 on some

keyboards.

As a special case, on input, a number with suffix "d" (with no

spaces between the number and the "d") is converted from degrees to

radians.

echo "90° sin @" | cliffer

echo "90 ° sin @" | cliffer

echo "90d sin @" | cliffer

are each equivalent to

echo "pi 2 div sin @" | cliffer

Input words can be spread across as many lines (or as few) as you

wish. If input is from an interactive terminal, any error causes

the rest of the current line to be thrown away, but the program does

not exit. In the non-interactive case, any error causes the program

to exit.

On input, a comma or tab is equivalent to a space. Multiple spaces

are equivalent to a single space.

Note on VRML format: X Y Z theta

[X Y Z] is a vector specifying the axis of rotation,

and theta specifies the amount of rotation around that axis.

VRML requires [X Y Z] to be normalized as a unit vector,

but we are more tolerant; we will normalize it for you.

VRML requires theta to be measured in radians.

Also note that on input, the VRML operator accepts either four

numbers, or one 3-component vector plus one scalar, as in the

following example.

Same as previous example, with more output:

echo -e "[1 0 0] 90° vrml dup @v dup @m

[0 0 1] -90° vrml rev mul dup @v @m" | cliffer

Result:

1.00000 0.00000 0.00000 1.57080 = 90.0000°

[ 1.00000 0.00000 0.00000 ]

[ 0.00000 0.00000 -1.00000 ]

[ 0.00000 1.00000 0.00000 ]

0.57735 0.57735 0.57735 2.09440 = 120.0000°

[ 0.00000 0.00000 1.00000 ]

[ 1.00000 0.00000 0.00000 ]

[ 0.00000 1.00000 0.00000 ]

Even fancier: Multiply two vectors to create a bivector,

then use that to crank a vector:

echo -e "[ 1 0 0 ] [ 1 1 0 ] mul normalize [ 0 1 0 ] crank @" \

| ./cliffer

Result:

[-1, 0, 0]

Another example: Calculate the angle between two vectors:

echo -e "[ -1 0 0 ] [ 1 1 0 ] mul normalize rangle @a" | ./cliffer

Result:

2.35619 = 135.0000°

Example: Powers: Exponentiate a quaternion. Find rotor that rotates

only half as much:

echo -e "[ 1 0 0 ] [ 0 1 0 ] mul 2 mul dup rangle @a " \

" .5 pow dup rangle @a @" | ./cliffer

Result:

1.57080 = 90.0000°

0.78540 = 45.0000°

1 + [0, 0, 1]§

Example: Take the 4th root using pow, then take the fourth

power using direct multiplication of quaternions:

echo "[ 1 0 0 ] [ 0 1 0 ] mul dup @v

.25 pow dup @v dup mul dup mul @v" | ./cliffer

Result

0.00000 0.00000 1.00000 3.14159 = 180.0000°

0.00000 0.00000 1.00000 0.78540 = 45.0000°

0.00000 0.00000 1.00000 3.14159 = 180.0000°

More systematic testing:

./cliffer.test1

The following operators have been implemented:

help help message

listops list all operators

=== Unary operators

pop remove top item from stack

neg negate: multiply by -1

deg convert number from radians to degrees

dup duplicate top item on stack

gorm gorm i.e. scalar part of V~ V

norm norm i.e. sqrt(gorm}

normalize divide top item by its norm

rev clifford '~' operator, reverse basis vectors

hodge hodge dual aka unary '§' operator; alt-' on some keyboards

gradesel given C and s, find the grade-s part of C

rangle calculate rotor angle

=== Binary operators

exch exchange top two items on stack

codot multiply corresponding components, then sum

add add top two items on stack

sub sub top two items on stack

mul multiply top two items on stack (in subspace if possible)

cmul promote A and B to clifs, then multiply them

div divide clif A by scalar B

dot promote A and B to clifs, then take dot product

wedge promote A and B to clifs, then take wedge product

cross the hodge of the wedge (familiar as cross product in 3D)

crank calculate R~ V R

pow calculate Nth power of scalar or quat

sqrt calculate square root of power of scalar or quat

=== Constructors

[ mark the beginning of a vector

] construct vector by popping to mark

unpack unpack a vector, quat, or clif; push its contents (normal order)

dimset project object onto N-dimensional Clifford algebra

unbave top unit basis vector in N dimensions

ups unit pseudo-scalar in N dimensions

pi push pi onto the stack

vrml construct a quaternion from VRML representation x,y,z,theta

clif take a vector in D=2**n, construct a clif in D=n

Note: You can do the opposite via '[ exch unpack ]'

=== Printout operators

setbasis set basis mode, 0=abcdef 1=xyzabc

dump show everything on stack, leave it unchanged

@ compactly show item of any type, D=3 (then remove it)

@m show quaternion, formatted as a rotation matrix (then remove it)

@v show quaternion, formatted in VRML style (then remove it)

@a show angle, formatted in radian and degrees (then remove it)

@x print clif of any grade, row by row

=== Math library functions:

sin cos tan sec csc cot

sinh cosh tanh asin acos atan

asinh acosh atanh ln log2 log10

exp atan2
